AMD’s Radeon R9 Fury X kicks ass.

It’s important to note that right up front, because AMD’s graphics division has had a rough year or so. The company’s been forced to watch Nvidia release not one, not two, but five new GeForce graphics cards—the entire GTX 900-series line—since the Radeon R9 285 launched last September. What’s more, those GeForce cards delivered so much performance and sipped so little power that all AMD could do in response was steeply slash prices of its Radeon R200-series graphics cards to stay competitive. And AMD’s “new” Radeon R300-series cards are basically just tweaked versions of the R200-series GPUs with more memory.

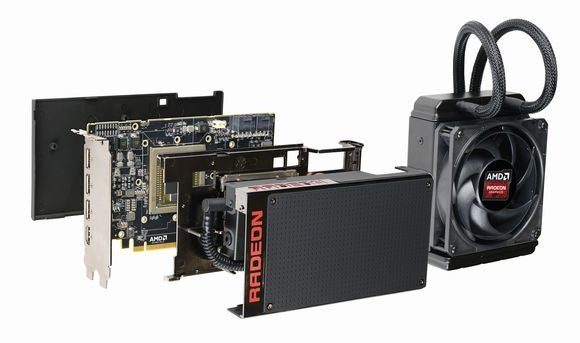

Through it all, the promise of the water-cooled Radeon R9 Fury X glimmered as the light at the end of the tunnel, first through unofficial leaks and then through official reveals. It’ll have cutting-edge high-bandwidth memory! It’ll have a new Fiji graphics processor with an insane 4,096 stream processors! It’ll have an integrated closed-loop water cooler! It’ll play 4K games and go toe-to-toe with Nvidia’s beastly Titan X and GTX 980 Ti!

And it’s all true. Every last bit of it. The Radeon R9 Fury X kicks ass.

It’s not quite the walk-off home run that Team Red enthusiasts were hoping for, however—and AMD’s claim that the Fury X is “an overclocker’s dream” definitely does not pass muster.

Let’s dig in.

AMD’s Radeon R9 Fury X under the hood

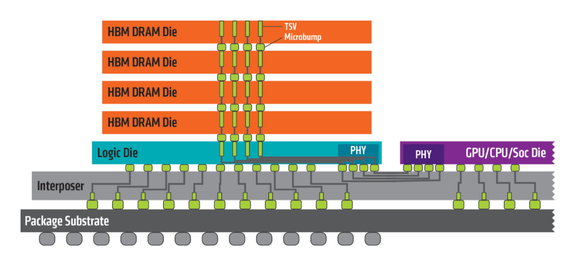

A diagram of AMD’s HBM implementation.

There isn’t much mystery to the Fury X’s technical specifications at this point. AMD long ago provided a deep-dive into the card’s HBM implementation and described the Fury X’s technical and design details with loving exactness just last week. We’ll cover the high points here, but check out our previous coverage if you’re looking for more details.

The most notable technical aspect of the Fury X is its use of high-bandwidth memory, making it the first graphics card to adopt HBM. AMD says it’s been developing the technology for seven years, and Nvidia’s not expected to embrace similar technology until 2016 at the earliest, when its Pascal GPUs launch.

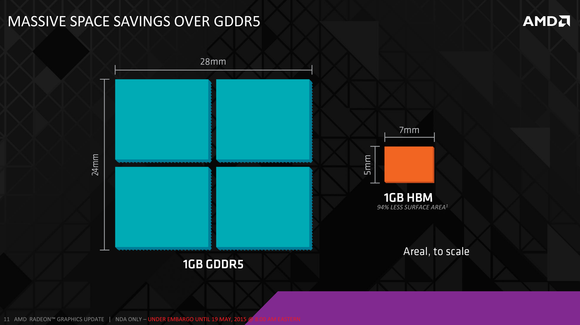

HBM stacks DRAM dies one atop the other, then connects everything with the GPU using “through-silicon vias” and “µbumps” (microbumps). The stacking lets 1GB of HBM consume a whopping 94-percent less on-board surface area than 1GB of standard GDDR5 memory, which enabled AMD to make the Fury X a full 30-percent shorter than the Radeon R9 290X.

While GDDR5 memory rocks high clock speeds (up to 7Gbps) and uses a comparatively narrow interface to connect to the GPU (384-bit, or 512-bit in high-end graphics cards), HBM takes the opposite approach. The Fury X’s memory is clocked at a mere 1Gbps, but travels over a ridonkulously wide 4,096-bit bus to deliver effective memory bandwidth of 512GBps, compared to the GTX 980 Ti’s 336.5GBps. All that memory bandwidth makes for great 4K gaming, though it doesn’t give the Fury X a clear edge over the 980 Ti in actual games, as we’ll see later.
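Those bandwidth figures come down to simple arithmetic: bus width times per-pin data rate, divided by eight to turn bits into bytes. Here's a rough Python sketch of that math. The function name is made up for illustration, and the 980 Ti's 384-bit bus and round 7Gbps rate are assumptions taken from the GDDR5 figures quoted above, which is why the result lands a hair under the 336.5GBps spec-sheet number.

```python
def peak_bandwidth_gbytes(bus_width_bits: int, rate_gbps_per_pin: float) -> float:
    """Peak memory bandwidth in gigabytes per second.

    bus_width_bits    -- number of data pins on the memory interface
    rate_gbps_per_pin -- effective data rate of each pin, in gigabits/s
    """
    return bus_width_bits * rate_gbps_per_pin / 8  # 8 bits per byte


# Fury X: very wide bus, slow pins (figures from the article).
fury_x_bw = peak_bandwidth_gbytes(4096, 1.0)

# GTX 980 Ti: narrow bus, fast pins (assumed 384-bit at a round 7Gbps).
gtx_980_ti_bw = peak_bandwidth_gbytes(384, 7.0)

print(f"Fury X:     {fury_x_bw:.1f} GB/s")
print(f"GTX 980 Ti: {gtx_980_ti_bw:.1f} GB/s")
```

The takeaway is that HBM gets its speed from width rather than clocks: the bus is more than ten times wider, so even at one-seventh the per-pin rate it comes out well ahead.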

Technological limitations capped this first-gen HBM at just 4GB of capacity. While AMD CTO Joe Macri told us in May that’s all developers really need for now, it definitely proved to be a problem in our testing when playing games that gobbled up more than 4GB of RAM—Grand Theft Auto V, specifically. Gaming at 4K resolution can eat up memory fast once you’ve enabled any sort of anti-aliasing.

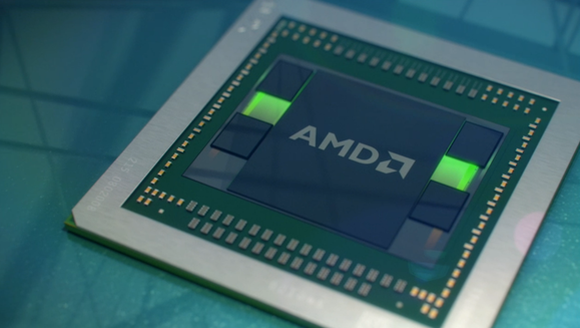

AMD’s Fiji GPU.

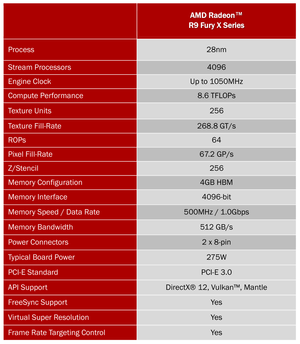

The AMD Radeon R9 Fury X’s technical specifications.

Moving past memory, AMD’s new “Fiji” GPU is nothing short of a beast, packed to the gills with 4,096 stream processors (compared to the R9 290X’s 2,816) and 8.9 billion transistors. It’s clocked at 1,050MHz, promises 8.6 teraflops of compute performance, and draws 275 watts of power, fed by two 8-pin power connectors that allow for up to 375W. Again, our previous coverage has much more info if you’re interested.
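If you're wondering where the 8.6 teraflops and 375W numbers come from, here's a back-of-the-envelope Python sketch. It leans on two assumptions that aren't spelled out in this review: that each stream processor retires one fused multiply-add (counted as two floating-point operations) per clock, which is the usual convention for peak-compute figures, and that the power ceiling follows the standard PCI Express limits of 75W from the slot plus 150W per 8-pin connector. The variable names are purely illustrative.

```python
# Figures quoted in the article.
STREAM_PROCESSORS = 4096
CORE_CLOCK_MHZ = 1050
TYPICAL_BOARD_POWER_W = 275
EIGHT_PIN_CONNECTORS = 2

# Assumptions (not from the article): one FMA = 2 FLOPs per clock,
# and the standard PCI Express power limits.
FLOPS_PER_CLOCK = 2
PCIE_SLOT_W = 75
EIGHT_PIN_W = 150

# Peak single-precision throughput, in teraflops.
peak_tflops = STREAM_PROCESSORS * FLOPS_PER_CLOCK * CORE_CLOCK_MHZ / 1_000_000

# Maximum power the slot and connectors are rated to deliver.
max_power_w = PCIE_SLOT_W + EIGHT_PIN_CONNECTORS * EIGHT_PIN_W

# Headroom between typical draw and the rated ceiling.
headroom_w = max_power_w - TYPICAL_BOARD_POWER_W

print(f"Peak compute: {peak_tflops:.1f} TFLOPS")
print(f"Power ceiling: {max_power_w}W, headroom: {headroom_w}W")
```

Keep in mind these are theoretical peaks. Real games rarely keep every stream processor busy on every clock.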

The Radeon R9 Fury X over the hood

AMD spared no expense on the physical design of the Fury X, either. The 7.5-inch card is built from multiple pieces of die-cast aluminum, then finished with a black nickel gloss on the exoskeleton and soft-touch black everywhere else. Everything’s covered, even the sides and back of the card. There’s not even an exhaust grille on the I/O plate, which rocks a trio of full-sized DisplayPorts and an HDMI port that’s sadly limited to the HDMI 1.4a specification. The decision not to go with HDMI 2.0 limits 4K video output to 30Hz through the HDMI port, so gamers will want to stick to using the DisplayPorts.
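Why does HDMI 1.4a top out at 30Hz for 4K? It comes down to pixel clock. The Python sketch below assumes the standard 4K video timing of 4,400 by 2,250 total pixels per frame (the visible 3,840 by 2,160 plus blanking) and the commonly cited maximum pixel clocks of 340MHz for HDMI 1.4 and 600MHz for HDMI 2.0 at 8 bits per color. None of those numbers come from AMD, so treat them as assumptions.

```python
# Assumed standard 4K timing: total pixels per frame, including blanking.
H_TOTAL = 4400
V_TOTAL = 2250

# Assumed maximum pixel clocks for each HDMI generation, in MHz.
HDMI_1_4_MAX_MHZ = 340
HDMI_2_0_MAX_MHZ = 600


def pixel_clock_mhz(refresh_hz: int) -> float:
    """Pixel clock needed to drive the assumed 4K timing at a refresh rate."""
    return H_TOTAL * V_TOTAL * refresh_hz / 1_000_000


for refresh in (30, 60):
    needed = pixel_clock_mhz(refresh)
    fits_1_4 = needed <= HDMI_1_4_MAX_MHZ
    fits_2_0 = needed <= HDMI_2_0_MAX_MHZ
    print(f"4K at {refresh}Hz needs {needed:.0f}MHz: "
          f"HDMI 1.4 {'ok' if fits_1_4 else 'too slow'}, "
          f"HDMI 2.0 {'ok' if fits_2_0 else 'too slow'}")
```

Under those assumptions, 4K at 30Hz squeaks in under the HDMI 1.4 ceiling while 60Hz needs roughly double the pixel clock, which only HDMI 2.0 (or DisplayPort) can supply.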

See the “GPU Tach” LEDs just above the two 8-pin power connectors?

You’ll find an illuminated red Radeon logo on the outer edge and face of the card, along with a new “GPU Tach” (as in “tachometer”) feature that places 8 small red LEDs above the power connections. The harder you push the card, the more LEDs light up. It’s super-dumb but honestly, it thrilled me to no end watching those little LEDs flare to life when booting up a game. There’s also a small green LED next to those that illuminates when AMD’s ZeroCore technology puts the Fury X to sleep. This thing screams “premium.”

That extends to the Fury X’s cooling system. Rather than going with a typical air-cooling solution, with a fan or blower, the Fury X utilizes an integrated closed-loop liquid cooler that’s basically a more refined version of the beastly Radeon R9 295X2’s water-cooling setup. It’s a slick custom design built in conjunction with Cooler Master, rocking a 120mm fan from Nidec on the radiator. AMD says the cooler itself is rated for up to 500W of thermal capacity.

Deploying water-cooling indeed keeps the Fury X running nice and cool. Despite AMD’s claim that the fan stays more than 10 decibels quieter than the Titan X’s air-cooled blower, however, I was surprised by just how much noise it puts out. Subjectively (I don’t have a decibel meter on hand), the Fury X’s radiator fan creates more sound than the fan on Nvidia’s reference GTX 980 Ti and AMD’s own R9 295X2, though I still wouldn’t call it loud.

The braided cables connecting the radiator to the card itself are a nice touch and far more aesthetically appealing than the R9 295X2’s plastic tubes. Be mindful of where you place the discrete radiator/fan combo, however: At 2.5 inches of total width (the same as the R9 295X2’s), it juts far enough into the case of PCWorld’s GPU testing machine to bang against our CPU’s closed-loop liquid cooler.

Final design note: You won’t be able to buy aftermarket variants of the Fury X with custom cooling or hefty overclocks applied by add-in board vendors like Asus or Sapphire. AMD says the Fury X is a reference design only, though the air-cooled Radeon R9 Fury scheduled for a July 14 release will have vendor-customized designs available.


The elephant in the room

Normally, this is where I’d leap into gaming benchmarks, but I wanted to talk about a more advanced issue first: overclocking.

With power pins capable of sucking down 100W of additional energy, a liquid-cooling solution rated for up to 500W of thermal capacity, and a redesigned AMD PowerTune/OverDrive that gives you more control over fine-tuning your card’s capabilities, the Radeon R9 Fury X seems tailor-made for hefty overclocking. Heck, AMD even touted the card’s overclockability (that’s a word, right?) at its E3 unveiling. “You’ll be able to overclock this thing like no tomorrow,” AMD CTO Joe Macri said. “This is an overclocker’s dream.”

That’s… well, that’s just not true, at least for the review sample I was given.

I was only able to push my Fury X from its 1,050MHz stock clock up to 1,100MHz, a very modest bump that added a mere 1 to 2 frames per second of performance in gaming benchmarks. You can’t touch the HBM’s memory clock, because AMD locked it down. And any time I tried upping the Fury X’s power limit in AMD’s PowerTune utility, even by 1 percent, instability instantly ensued.
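To put that overclock in perspective, here's a quick Python sketch of the math. The clock speeds are the ones from my testing; the 35 fps baseline is a hypothetical round number chosen only to illustrate the scale, not a measured result, and the sketch optimistically assumes frame rates scale perfectly with core clock.

```python
STOCK_CLOCK_MHZ = 1050
OVERCLOCK_MHZ = 1100

# Hypothetical 4K frame rate at stock clocks, for illustration only.
BASELINE_FPS = 35.0


def clock_gain_percent(stock_mhz: float, overclocked_mhz: float) -> float:
    """Percentage increase in core clock from an overclock."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100


gain_pct = clock_gain_percent(STOCK_CLOCK_MHZ, OVERCLOCK_MHZ)

# Best case: performance scales one-to-one with core clock.
best_case_fps_gain = BASELINE_FPS * gain_pct / 100

print(f"Clock increase: {gain_pct:.2f}%")
print(f"Best-case gain at {BASELINE_FPS:.0f} fps: {best_case_fps_gain:.2f} fps")
```

A clock bump of under 5 percent can only ever buy a frame or two at 4K frame rates, which lines up with what the benchmarks showed.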

An AMD representative told me that “We had a very limited number of OC boards.” When I asked whether there will be different variants of the Fury X, given this “OC board” talk, I was told that there will only be one SKU, and it’s the usual “silicon lottery” when it comes to your GPU’s overclocking capabilities. (Overclocking capabilities vary from individual GPU to individual GPU; another Fury X could have much more headroom than ours, for example.)

All that said, we’ve heard through the grapevine that we’re not the only ones experiencing disappointing overclocks with the Fury X, either. So if you’re considering picking up a Fury X, peruse the following gaming benchmarks knowing that you may not be able to eke out additional performance via overclocking.

AMD Radeon R9 Fury X gaming benchmarks

Enough preamble! Let’s dive into the nitty-gritty.

As with all of our graphics card reviews, I benchmarked the Radeon R9 Fury X on PCWorld’s GPU testing system, which contains:

- Intel’s Core i7-5960X with a Corsair Hydro Series H100i closed-loop water cooler, to eliminate any potential for CPU bottlenecks affecting graphical benchmarks

- An Asus X99 Deluxe motherboard

- Corsair’s Vengeance LPX DDR4 memory, Obsidian 750D full tower case, and 1200-watt AX1200i power supply

- A 480GB Intel 730 series SSD

- Windows 8.1 Pro

As far as the games go, we used the in-game benchmarks provided with each, utilizing the stock graphics settings mentioned unless otherwise noted. We focused on 4K gaming results for this review.

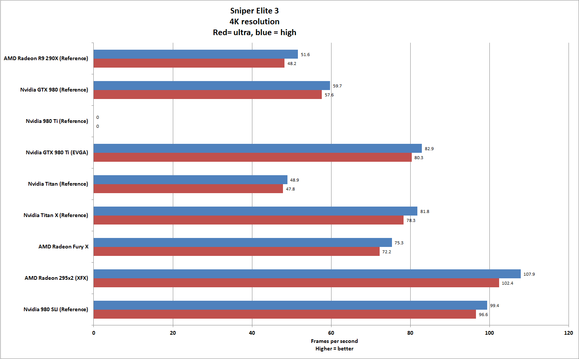

I’ve compared the Fury X against Nvidia’s reference GeForce GTX 980 Ti, GeForce GTX 980, and the $1,000 Titan X, as well as AMD’s older Radeon R9 290X and the Radeon R9 295X2, which packs two of the “Hawaii” GPUs found in the R9 290X. I’ve also included some benchmarks from a card that we won’t have a formal review for until later this week: EVGA’s $680 GeForce GTX 980 Ti Superclocked+, an aftermarket version of the GTX 980 Ti that sports EVGA’s popular ACX 2.0+ dual-fan cooling system.

Spoiler alert: EVGA’s custom GeForce GTX 980 Ti Superclocked+ with ACX 2.0+ cooling.

EVGA sent me the GTX 980 Ti SC+ on the same day AMD passed me the Fury X (pure coincidence, I’m sure). We’ll dissect it in full detail in our review later this week, but basically, the ACX 2.0+ cooler helps EVGA’s model run a full 9 degrees Celsius cooler than the 980 Ti reference design, which in turn let EVGA crank the GPU’s base clock up to 1,102MHz, boosting to 1,190MHz when needed. The stock GTX 980 Ti packs 1,000MHz base and 1,075MHz boost clocks, for reference.

Spoiler alert: This EVGA GeForce GTX 980 Ti Superclocked+ is a beast that outpunches both the Fury X and Titan X itself—something EVGA was no doubt aware of when it sent me the card just in time to coincide with the Fury X launch.

But remember: Even if the EVGA card is more beastly, the Fury X still kicks ass.

Housekeeping notes: You can click on any graph in this article to enlarge it. Note that only 4K results are listed here due to time constraints, but I can drop 2560×1440 resolution benchmarks for the Fury X in the comments if anybody’s interested. (With the exception of Sleeping Dogs, Dragon Age, and GTA V on ultra settings—all of which hover in the 40 to 50 fps range—the Fury X clears 70 fps in every other tested gaming benchmark at that resolution.)

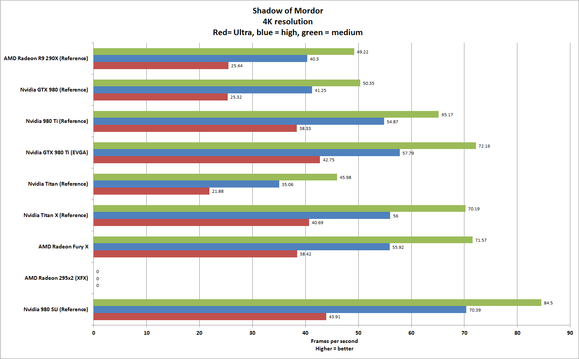

Let’s kick things off with Middle-earth: Shadow of Mordor. This nifty little game gobbled down tons of industry awards and, more importantly for our purpose, offers an optional Ultra HD textures pack that is only recommended for cards with 6GB or more of onboard memory. That doesn’t hinder the Fury X’s ability to come out swinging with slightly higher frame rates than the reference GeForce GTX 980 Ti—no small feat, especially when the game opens with a splash page championing Nvidia technology.

The game was tested at Medium and High quality graphics presets, then by using the Ultra HD Texture pack and manually cranking every graphics option to its highest available setting, which Shadow of Mordor’s Ultra setting doesn’t actually do. The R9 295X2 crashed every time I attempted to change Mordor’s resolution or graphics settings, hence the zero scores. (Ah, the joys of multi-GPU setups.)

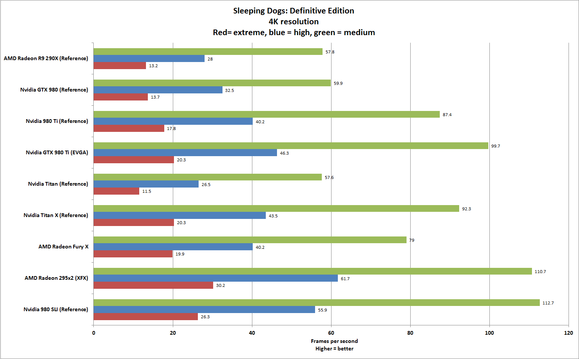

Sleeping Dogs: Definitive Edition absolutely murders graphics cards when the graphics settings are set to Extreme at high resolutions. Only the dual-GPU Radeon R9 295X2 hits 30 fps at 4K resolution, though the Fury X hangs with its Nvidia counterparts.

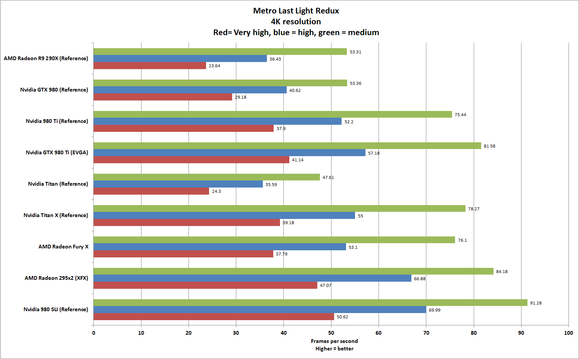

The Fury X also hangs tight with the reference GTX 980 Ti in Metro Last Light Redux, which we test with PhysX and the frame rate-killing SSAA options disabled. EVGA’s version of the GTX 980 Ti trumps all single-GPU comers, though the dual-GPU Radeon R9 295X2 fires on all cylinders in this title.

Again, the Fury X and reference 980 Ti are neck and neck in Alien Isolation, a game that scales well across all hardware types and falls under AMD’s Gaming Evolved brand.

The gorgeous Dragon Age: Inquisition also partnered with AMD at its launch, but Nvidia’s cards maintain a clear lead here. Note that the R9 295X2 apparently doesn’t have a working CrossFire profile for the game, so it drops down to using a single GPU.

The same goes for Sniper Elite 3. Note that we didn’t have a chance to test the reference GTX 980 Ti here.

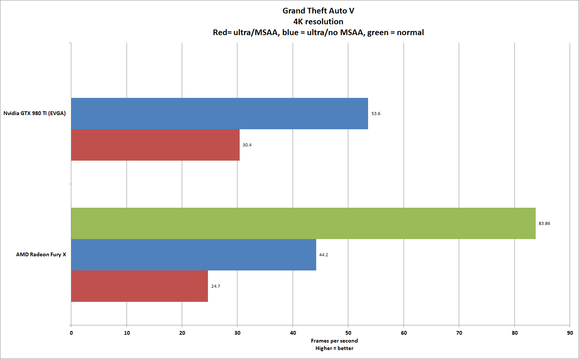

We also tested the Fury X and EVGA’s 980 Ti Superclocked+ in Grand Theft Auto V, because the game is notorious for demanding more than 4GB of memory—HBM’s top capacity—at high resolutions.

We tested the game three ways at 4K resolution. First, by cranking all the sliders and graphics settings to their highest settings, then enabling 4x MSAA and 4x reflections MSAA in order to push RAM usage past the Fury X’s 4GB capacity; then, using the same settings but disabling all MSAA to drop the memory usage to 4,029MB, just under the Fury X’s limit; and then by testing the Fury X’s chops at normal graphics settings with MSAA disabled, which consumes 1,985MB of memory. (We didn’t have time to benchmark any other cards, alas.)
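To show just how close to the edge that middle test runs, here's a small Python sketch comparing the two memory figures above against the Fury X's capacity. It assumes 4GB means 4,096MB, and the scenario labels are my own shorthand rather than anything GTA V displays.

```python
# Fury X onboard memory, assuming 4GB = 4,096MB.
FURY_X_CAPACITY_MB = 4 * 1024

# Memory usage figures reported in the article, in MB.
scenarios = {
    "max settings, MSAA off": 4029,
    "normal settings, MSAA off": 1985,
}


def headroom_mb(usage_mb: int, capacity_mb: int = FURY_X_CAPACITY_MB) -> int:
    """Free video memory left over; a negative value means spilling over."""
    return capacity_mb - usage_mb


for label, usage in scenarios.items():
    free = headroom_mb(usage)
    percent_used = usage / FURY_X_CAPACITY_MB * 100
    print(f"{label}: {usage}MB used ({percent_used:.1f}%), {free}MB free")
```

With anti-aliasing off, maxed-out settings leave only a few dozen megabytes to spare, so it doesn't take much extra load to tip the card over into slower system memory.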

The EVGA card pounds the Fury X here—no wonder GTA V wasn’t included in the reviewer’s guide benchmarks AMD provided for the Fury X last week. But the frame rate averages alone don’t show the full experience: When GTA V was pushed to consume more memory than the Fury X has onboard, the experience became extremely stuttery, choppy, and graphically glitchy as the card offloaded duties to system memory, which is far slower than HBM.

That’s to be expected when a game’s memory use exceeds the onboard capabilities of a graphics card, however, which was a big part of the reason gamers were in such a tizzy over the GTX 970’s segmented memory setup earlier this year, in which the last 0.5GB of the card’s 4GB of RAM performs much slower than the rest.


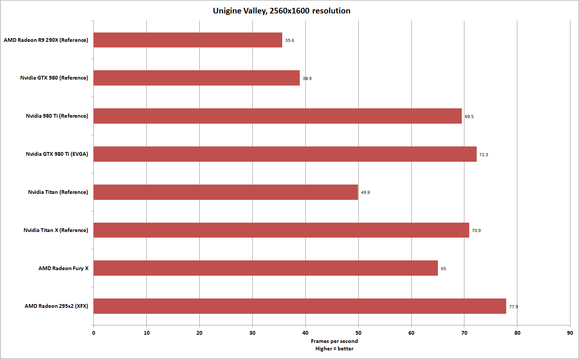

I also tested the systems using three synthetic but well-respected benchmarking tools: 3DMark’s Fire Strike and Fire Strike Ultra, as well as Unigine’s Valley. As AMD promised, the Fury X comes out ahead of the reference GTX 980 Ti in Fire Strike and Fire Strike Ultra, beating even the EVGA variant at the former, perhaps due to HBM’s speed, though the tables are turned in the Valley results.

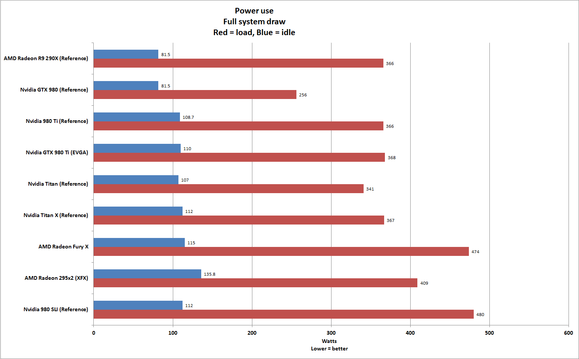

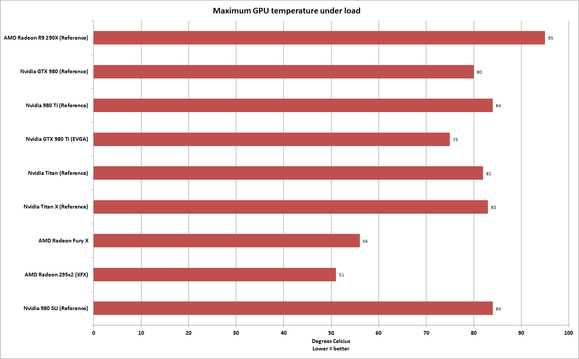

To test power and thermal information, I run the grueling Furmark benchmark for 15 minutes, taking temperature information at the end using Furmark’s built-in tool and double-checking it with SpeedFan. Power is measured on a whole-system basis, instead of the GPU alone, by plugging the PC into a Watts Up meter rather than the wall socket.

As you can see, the Fury X may technically need only 275W for what AMD calls “typical gaming scenarios,” but it draws much, much more under Furmark’s worst-case scenario: nearly as much as two GTX 980s (not Tis) in SLI. It drew even more power than the dual-GPU Radeon R9 295X2.

On the positive side, the Fury X runs extremely cool, hitting 56 degrees Celsius max after several hours of overclocking. There would be plenty of room for overclocking… if the chip itself overclocked worth a damn.

Bottom line

So there you have it: Between the new Fiji GPU and the inclusion of HBM, AMD’s Radeon R9 Fury X enters the rarefied air of single-GPU cards capable enough to play games at 4K resolutions with high graphics detail settings enabled—an exclusive club containing only it, the GTX 980 Ti, and the Titan X. (Like the Titan X and 980 Ti, the Fury X struggles to hit a full 60fps at 4K/high, however, so if you opt to pick one up you should consider picking up a new 4K FreeSync monitor to go with it.)

One more time: The Fury X kicks ass! Both technically and aesthetically. AMD needed a hit, and the Fury X is sure to be one with Team Red enthusiasts.

That said, it’s hard not to feel a bit disappointed about some aspects of the card—though that may have to do more with AMD’s failure to manage expectations for it.

After hearing about HBM’s lofty technical numbers for months, it’s disappointing to see little to no pure gaming benefits from all that bandwidth. After seeing the tech specs and hearing AMD’s Joe Macri wax poetic about the Fury X’s overclocking potential, it’s majorly disappointing to see it fail so hard on that front, crappy silicon lottery draw or no. And while 6GB of RAM is still overkill for the vast majority of today’s games, it’s disappointing to see the Fury X limited to just 4GB of capacity when some of today’s games are starting to blow through that at the 4K resolution that AMD’s new flagship is designed for, as evidenced by our GTA V results.

The timely arrival of EVGA’s custom GTX 980 Ti, which beats both AMD and Nvidia’s reference flagships in raw benchmarks, also takes some of the wind out of the Fury X’s sails—wind that can’t be countered by AMD’s own hardware partners, because the Fury X is limited to reference designs alone.

No, the Fury X isn’t the Titan-killer that Team Red fans hoped it would be, but it is a GTX 980 Ti equal. This is nothing short of a powerful, thoughtful graphics card that once again puts AMD Radeon on equal footing with Nvidia’s gaming finest. Being one of the most powerful graphics cards ever created is nothing to sneeze at, especially when AMD wrapped it all up in such a lovingly designed package.

AMD’s Radeon R9 Fury X kicks ass… even if it doesn’t make Nvidia’s high-end offerings obsolete.