Austin, Texas— “This is the… largest chip project endeavor in the history of humanity, with a budget of several billion dollars,” Nvidia CEO Jen-Hsun Huang said proudly onstage, a smile on his face. “I’m pretty sure you can go to Mars [for that budget].” And the result? Nvidia says its new GeForce GTX 1080 is far faster than the Titan X, the fastest single-GPU graphics card in the world today—and even faster than two GTX 980s in SLI.

And with that “irresponsible level of performance,” the next-generation graphics card wars are officially on. Hot damn.

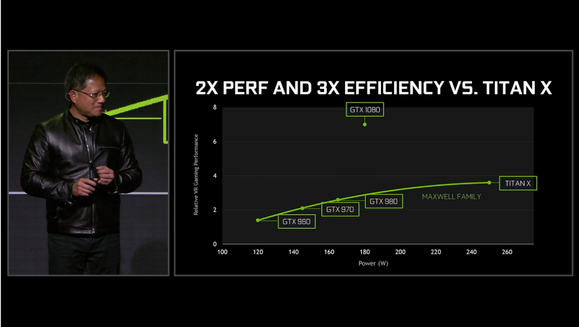

That sort of performance and efficiency drives home the graphical glories enabled by the long-awaited leap to 16-nanometer process technology in GPU transistors. After four long years of being stuck on the 28nm transistor process node, both of the big graphical powerhouses in PC gaming are finally transitioning to GPU architectures with smaller transistors—Nvidia with the Pascal GPU in the GTX 1080 and GTX 1070, and AMD with Polaris, which is built on a 14nm process. These advances should bring greater power efficiency and performance to both vendors’ graphics cards.

AMD’s early Polaris demos showed off its power-efficiency advantages, such as running Star Wars Battlefront at 1080p at just 86 watts of full system power, compared to 140 watts on an otherwise identical system running Nvidia’s GTX 950. Team Green, by contrast, opted to come out with the big guns blazing. Again: The GeForce GTX 1080 will be faster than two GTX 980s in SLI, according to Huang. And the GTX 1070 will out-punch a Titan X for roughly a third of the Titan X’s price. (It’s important to note, however, that Nvidia didn’t reveal the resolutions at which these benchmarks were run.)

Brad Chacos

The GeForce GTX 1080 features 8GB of RAM, HDMI 2.0b and DisplayPort 1.4, and twice the performance of Nvidia’s fastest GPU. And get this: It’s all powered by a single 8-pin power connector.

Hot. Damn.

The GeForce GTX 1080 is Nvidia’s first consumer graphics card built around the 16-nanometer manufacturing process, a technological leap bolstered by the addition of FinFET technology, as first revealed in the monstrous Tesla P100 in April.

Unlike the Tesla P100, the GeForce GTX 1080 skips the revolutionary high-bandwidth memory first revealed in AMD’s Fury lineup. Instead, it features 8GB of Micron’s new GDDR5X technology—essentially a more advanced version of the GDDR5 memory used in graphics cards for years now—clocked at a whopping 10Gbps. Before you shake your head in disappointment, the first-gen HBM available today is limited to 4GB in capacity, and second-gen HBM2 (and its higher capacities) isn’t expected to land until the beginning of 2017. Even AMD’s next HBM-equipped graphics cards won’t launch until 2017.

Huang didn’t reveal many technical details, but Epic’s Tim Sweeney took the stage to run a demo of the studio’s upcoming MOBA Paragon. At the end of the demo, Huang called for an overlay of the system’s specifications to be pulled up. The GeForce GTX 1080 was clocked at a whopping 2,114MHz, one of the highest GPU clock speeds ever recorded, Huang said, yet it remained an icy-cool 67 degrees Celsius. “Its overclockability is just incredible,” Huang boasted.

Hot. Damn.

The move to a 16nm process gives Nvidia’s newest GPU an insane jump in performance and efficiency.

Even better: Nvidia’s new cards are coming soon and won’t break the bank, especially when you compare their performance to the GTX 900-series. The GTX 1080 will cost $600, or $700 for an Nvidia-designed edition, when it launches on May 27. The GTX 1070, meanwhile, will cost $380, or $450 for an Nvidia-designed edition, when it launches on June 10. Both feature a redesigned shroud that largely mimics the aesthetic stylings of the GTX 700-series and 900-series reference cards, but with a much more angular, aggressive look that brings the chunky polygons of early 3D video games to mind.

So what are you supposed to do with all that firepower, besides play games wonderfully?

Nvidia showcased a fancy new technology called “simultaneous multi-projection” that puts Pascal’s oomph to good work. Simultaneous multi-projection improves how a game looks on multiple displays, while also significantly boosting your game’s frame rate. Traditionally, when you’re using a multi-display setup, the GPU still projects a single flat image across every screen, which creates distortion in the image once the side displays are angled toward you. Think of folding a paper with a picture on it: rather than seeing a straight line across it, you see two slightly bent lines. Simultaneous multi-projection dynamically adjusts the image to look natural across your screens, so those straight lines appear straight yet again.
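To make the folded-paper analogy concrete, here is a minimal top-down geometry sketch in Python. It is not Nvidia’s implementation, and every name and number in it is a hypothetical chosen for illustration: a viewer sits at distance `d` from a center monitor of width `w`, and a side monitor is hinged at the center monitor’s edge and rotated toward the viewer by `alpha`. The sketch compares where a given view direction lands if the side monitor is treated as a flat extension of the center screen with where that direction really crosses the angled panel.

```python
import math


def flat_offset(theta: float, d: float, w: float) -> float:
    """Distance from the hinge at which direction theta lands when the side
    monitor is treated as a flat continuation of the center monitor."""
    return d * math.tan(theta) - w / 2


def angled_offset(theta: float, d: float, w: float, alpha: float) -> float:
    """Distance from the hinge at which the view ray in direction theta
    actually crosses a side monitor rotated toward the viewer by alpha."""
    return (d * math.sin(theta) - (w / 2) * math.cos(theta)) / math.cos(theta - alpha)


def seen_angle(s: float, d: float, w: float, alpha: float) -> float:
    """Direction in which the viewer sees a point drawn at distance s from
    the hinge on the angled side monitor."""
    x = w / 2 + s * math.cos(alpha)  # sideways position of the point
    z = d - s * math.sin(alpha)      # depth of the point in front of the viewer
    return math.atan2(x, z)


if __name__ == "__main__":
    # Hypothetical setup: 0.6 m viewing distance, 0.6 m wide monitors,
    # side monitor angled in by 30 degrees, object 40 degrees off-center.
    d, w = 0.6, 0.6
    alpha, theta = math.radians(30), math.radians(40)

    s_flat = flat_offset(theta, d, w)
    s_true = angled_offset(theta, d, w, alpha)

    print(f"flat projection draws the point {s_flat:.3f} m from the hinge")
    print(f"a per-display projection would draw it {s_true:.3f} m from the hinge")
    print(f"flat version is seen at {math.degrees(seen_angle(s_flat, d, w, alpha)):.1f} degrees")
    print(f"corrected version is seen at {math.degrees(seen_angle(s_true, d, w, alpha)):.1f} degrees")
```

In this toy model the flat projection places the point at the wrong spot on the angled panel, so the viewer sees it in a different direction than intended, which is the “bent line” effect described above. Projecting separately for each display removes the mismatch.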

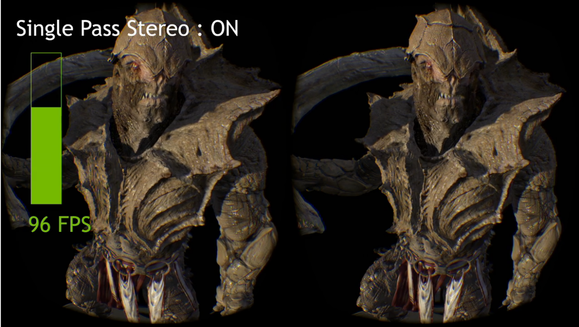

It sounds silly, but it could potentially fix a pain point in PC gaming, and it works across a whopping 16 “viewpoints,” be they traditional displays or VR eyepieces. To create the experience, Nvidia’s GPUs pre-distort the image in a manner similar to Nvidia VRWorks’ multi-resolution shading, and render only the central area you’re looking at in full resolution. That lets the system deliver higher frame rates by focusing on what’s important: in one demo, the frame rate jumped from roughly 60fps to 96fps with simultaneous multi-projection enabled.
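For a sense of scale, the demo’s jump from roughly 60fps to 96fps can be restated as a per-frame time budget and a percentage uplift. The short Python sketch below only does that arithmetic on the figures quoted above; the function names are hypothetical and nothing here measures real hardware.

```python
def frame_time_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


def uplift_percent(before_fps: float, after_fps: float) -> float:
    """Relative frame-rate gain, as a percentage of the starting rate."""
    return (after_fps - before_fps) / before_fps * 100.0


if __name__ == "__main__":
    before, after = 60.0, 96.0  # approximate figures from the demo
    print(f"{before:.0f}fps is {frame_time_ms(before):.1f} ms per frame")
    print(f"{after:.0f}fps is {frame_time_ms(after):.1f} ms per frame")
    print(f"uplift: {uplift_percent(before, after):.0f} percent")
```

Put another way, each frame in that demo went from taking about 16.7 milliseconds to about 10.4 milliseconds, a 60 percent increase in frame rate.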

I talked with developers from Cyan, who were showcasing simultaneous multi-projection in Myst spiritual successor Obduction. They’ve observed up to a 30-percent leap in frame rates in their game with the technology enabled. Even on a single display, you have to hunt for the distortions at the edges of the screen to notice them.

The GeForce GTX 1080’s support for simultaneous multi-projection means far more performance in VR games than before.

But that wasn’t all.

Huang also revealed some snazzy new software tricks, starting with Ansel, which Huang touted as the “world’s first in-game 3D camera system” while mocking the traditional method of pressing Print screen or F12 in Steam to capture your gaming glories. Ansel—which Huang says was inspired by digital game artists like Duncan “Dead End Thrills” Harris—is an SDK that plugs into games and is built directly into Nvidia’s drivers.

Ansel lets you do far more than simply capture a still image of a scene. The SDK supports free-floating cameras, various vignettes and filters, and custom resolutions up to 32 times higher than standard monitors. In a demo, Nvidia showed captures up to 61,000 pixels wide. Perhaps most interestingly, Ansel supports 360-degree images, which let you explore wrap-around scenes you’ve captured in a VR headset like the HTC Vive or even a Google Cardboard headset.

Nvidia’s Ansel will give you the ability to take a Kodak moment in a video game.

Look for support for Ansel to land in games like Witcher 3, Lawbreakers, Paragon, and The Division at some point in the future. It’s kind of a bummer that (it appears) developers have to explicitly build support into their games for the technology, but what a cool, exciting technology it is.

Nvidia also revealed “VRWorks Audio,” a new addition to the GameWorks/VRWorks toolset that uses fancy software tricks to deliver true positional audio in virtual reality games. We won’t get into the details, but it should make VR games even more immersive, and you can try it yourself in an upcoming VR app dubbed Nvidia Funhouse, which is essentially a series of carnival games that showcase Nvidia’s VRWorks technology.


Imagine how fast two of these must be.

All impressive stuff indeed. It’s your move, AMD. I suspect we’ll hear more about Polaris-based graphics cards sooner rather than later.

Editor’s note: This article was updated with additional details.