Pros

- Fantastic multi-core performance and comparable single-threaded performance

- Competes with far pricier CPUs

- Native PCIe 4.0 support

Cons

- PCIe 4 only when paired with newest motherboard chipset

- Motherboards slightly more expensive than before

- Slightly trails Intel in gaming performance


Update: We’ve since added 3D viewport and Cinegy’s Cinescore performance results and have updated our gaming benchmarks to include scores for the older Ryzen chip in Far Cry 5 and Deus Ex: Mankind Divided.

Our review of AMD’s 12-core Ryzen 9 3900X CPU, in five words:

Damn, this CPU is fast.

But keep reading, because the Ryzen 9 3900X is likely as significant, and likely as game-changing, as AMD’s original K7 Athlon-series of CPUs that crossed the 1GHz line first, or its Athlon 64 CPU that ushered in 64-bit computing in a desktop PC.

You’d think the Ryzen 9 3900X would have a hard time achieving the same greatness. It’s true that it doesn’t quite shake all the gaming-performance bugaboos of past generations. But we think when the dust settles, the CPU series will easily be a first-ballot, CPU hall of fame entry.

AMD

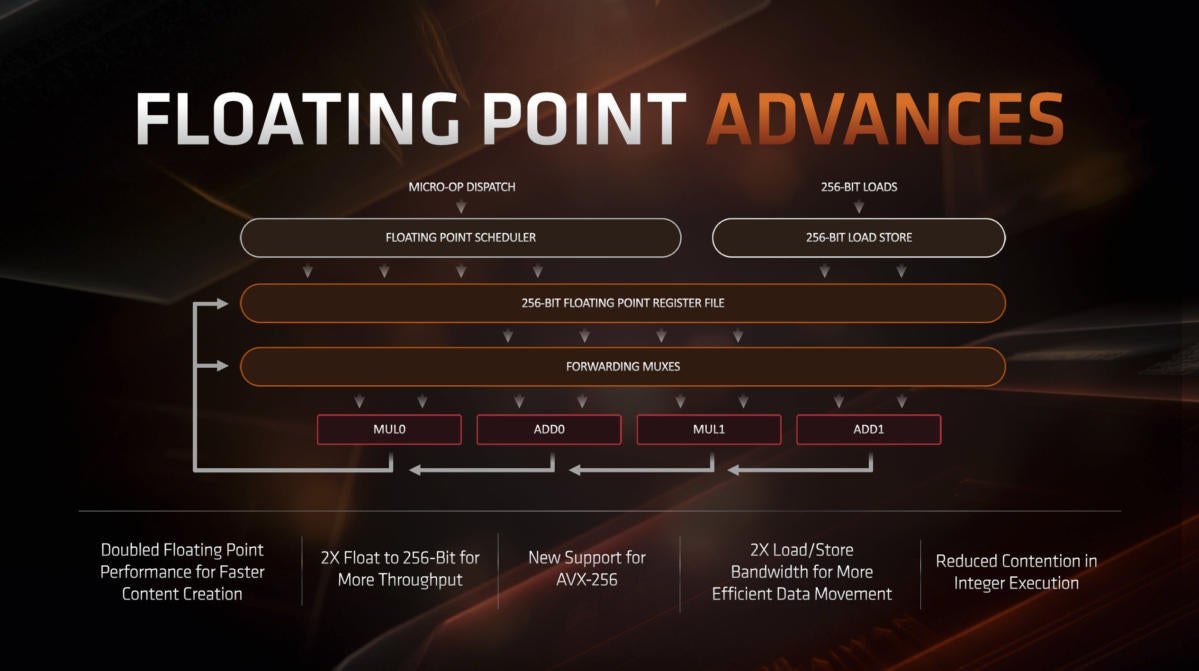

AMD said it has improved its floating point performance by 2X on its Ryzen 3000 series of CPUs.

It is, after all, the first consumer x86 chip to be produced on a 7nm process node. Intel’s current desktop chips are still all built on a 14nm process node, and the company will just begin to move to 10nm later this year. We suspect the chip giant is a little envious that AMD reached this tiny die shrink first.

With that production technology lead, AMD breaks out a redesigned 2nd generation “Zen” core for the Ryzen 3000 that promises double the floating point performance over the previous Ryzen 2000 series, as well as a 15-percent increase in “instructions per clock” (think overall efficiency per clock).

On an even deeper level, AMD said it has improved instruction pre-fetching, further enhanced the instruction cache and doubled the micro-op cache. Besides doubling the floating point performance, AMD has now adopted AVX-256 (256-bit Advanced Vector Extensions) (and yes, Intel fans, we know Core has AVX-512). AVX’s impact is mostly seen in video encoding today, but it can rear its performance head elsewhere too.

AMD has essentially doubled the L3 cache on the Ryzen 3000 chips, and the company is going for some Apple-esque marketing by calling it Game Cache. The cache, up to 70MB on the Ryzen 9 3900X, goes a long way toward reducing memory latency on the Ryzen 3000s. It also tends to boost gaming performance dramatically on the CPU, so AMD feels calling it Game Cache can help the average consumer understand its benefits. Yes, that larger L3—err, Game Cache will also help application performance, but no one gets excited about App Cache we guess.

AMD

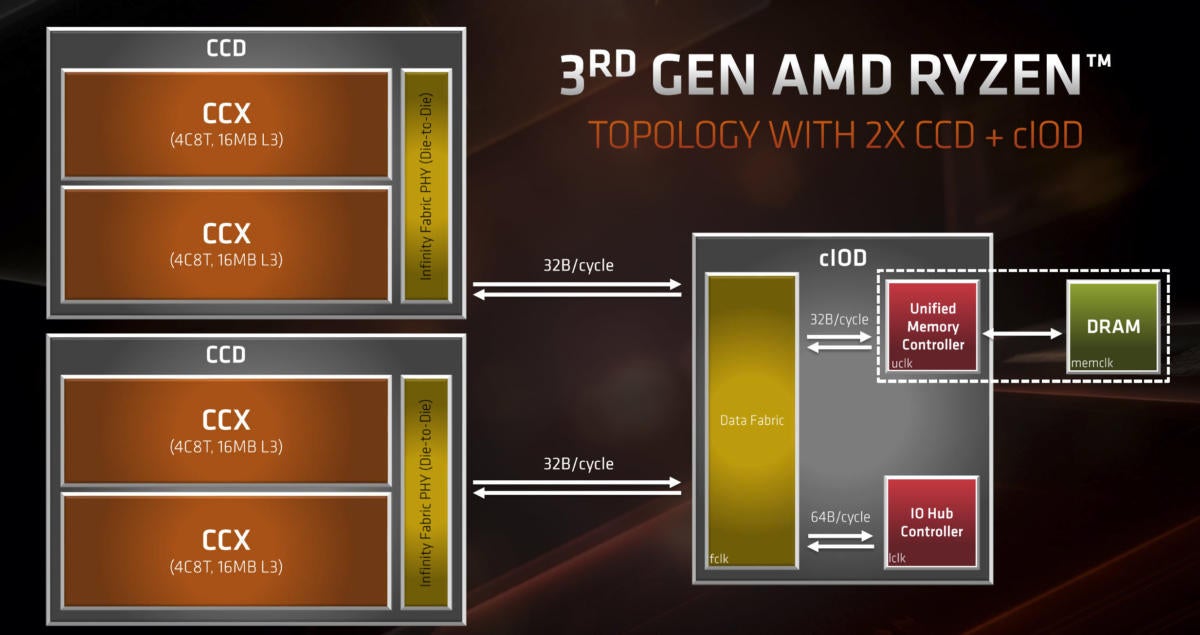

The Ryzen 3000 features two 7nm CCDs, which feed into an IO die that has the memory controller and PCIe controller.

Besides the cores, AMD has also significantly rejiggered its chiplet design. While the original Zen-based Ryzens featured two 14nm CCDs along with their memory and PCIe controller joined with Infinity Fabric, the Zen 2-based Ryzen 3000s actually move the memory controller and PCIe 4.0 controller onto a separate IO die. Unlike the 7nm compute cores, the IO die is built on a 12nm process. This helps lower the overall cost of the CPU because it saves AMD’s fab partner, TSMC, from using even more valuable 7nm process wafers to build the IO die.

The thousand-dollar question is whether the gaming deficit, which has dogged Ryzen from Day One, has finally been erased in situations where the GPU is not the limiting factor. We can say from what we’ve seen that Ryzen 3000 doesn’t quite win all of the time, but it’s so close now, even with Nvidia’s brutally fast RTX 2080 Ti driving it, that it just won’t matter 99 percent of the time.

PCIe 4.0?!

Yes, we said PCIe 4.0, which is the next iteration of PCIe. PCIe 4.0 essentially doubles the clock speed and throughput over PCIe 3.0. AMD’s move to PCIe 4.0 is another feather in its cap, as Intel continues to stick with PCIe 3.0 speeds on its CPUs. Nvidia, likewise, “only” has PCIe 3.0-based GPUs.
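To put that doubling in numbers, here is a rough sketch of the per-lane arithmetic. It assumes the published signaling rates of 8 GT/s for PCIe 3.0 and 16 GT/s for PCIe 4.0, plus the 128b/130b line encoding both generations use. It ignores packet and protocol overhead, so real SSD throughput will land lower. The function name is ours, purely for illustration.

```python
# Rough theoretical PCIe bandwidth per direction, ignoring protocol overhead.
# Assumed inputs: 8 GT/s (PCIe 3.0), 16 GT/s (PCIe 4.0), 128b/130b encoding.

def pcie_bandwidth_gbytes(gigatransfers_per_s: float, lanes: int) -> float:
    """Return approximate one-way bandwidth in GB/s for a PCIe link."""
    encoding_efficiency = 128 / 130  # 128 payload bits per 130 bits on the wire
    bits_per_byte = 8
    return gigatransfers_per_s * encoding_efficiency / bits_per_byte * lanes

gen3_x4 = pcie_bandwidth_gbytes(8, lanes=4)   # a typical NVMe SSD link on PCIe 3.0
gen4_x4 = pcie_bandwidth_gbytes(16, lanes=4)  # the same four lanes on PCIe 4.0

print(f"PCIe 3.0 x4: {gen3_x4:.2f} GB/s")
print(f"PCIe 4.0 x4: {gen4_x4:.2f} GB/s")
print(f"Ratio: {gen4_x4 / gen3_x4:.1f}x")
```

By this estimate a four-lane SSD link goes from a little under 4 GB/s to a little under 8 GB/s, which is why storage is the first place the new standard shows up.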

While the actual performance benefit of PCIe 4.0 outside of SSDs won’t be easily realized today, the new standard does help free up more lanes and more ports elsewhere in the PC. If you want to take advantage of PCIe 4.0 SSDs, AMD’s Ryzen 3000 and the new X570 chipset are the only game in town.

You can read all about PCIe 4.0 in this explainer. If you’re confused about the simultaneous presence of PCIe 5.0 and PCIe 6.0 in earlier stages of development, remember that it takes time to go from initial spec to actual hardware. PCIe 4.0 is basically the only answer today and a decent bragging point for AMD.

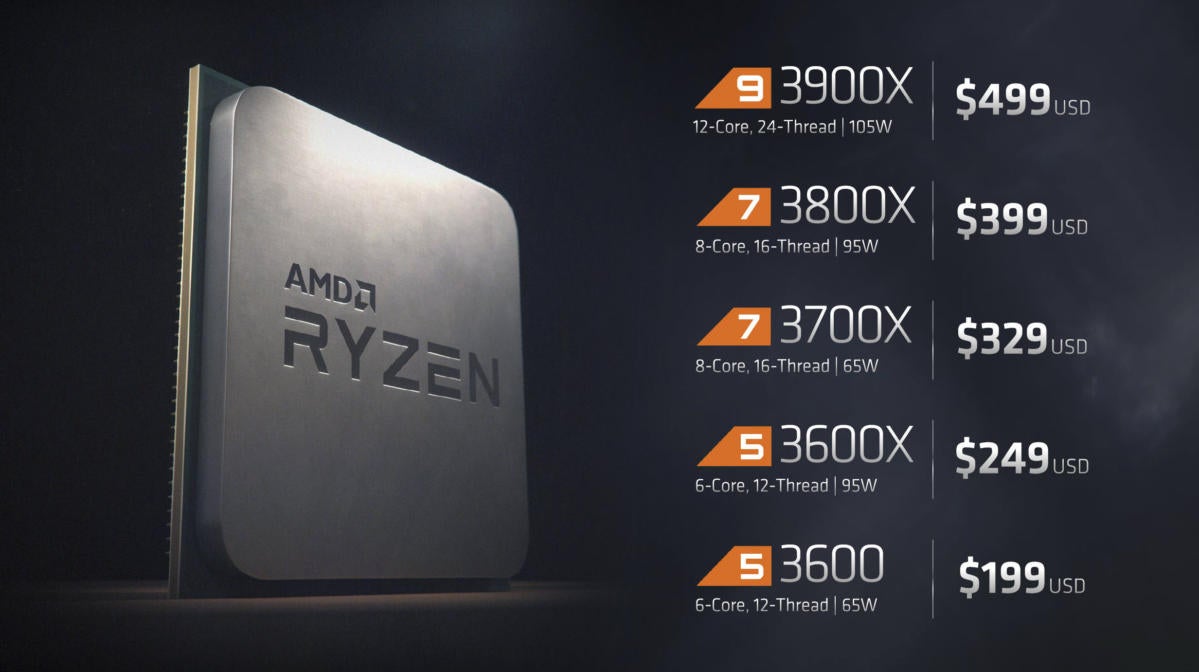

Boatloads of value

And yes, there’s still value. While Intel charges $488 for its flagship 8-core Core i9-9900K, AMD will give you 12 cores it claims are just as fast, if not faster, for $499, with a bundled RGB cooler too.

AMD

AMD’s lineup of Ryzen 3000 seems poised to push Intel’s entire lineup off the field of battle.

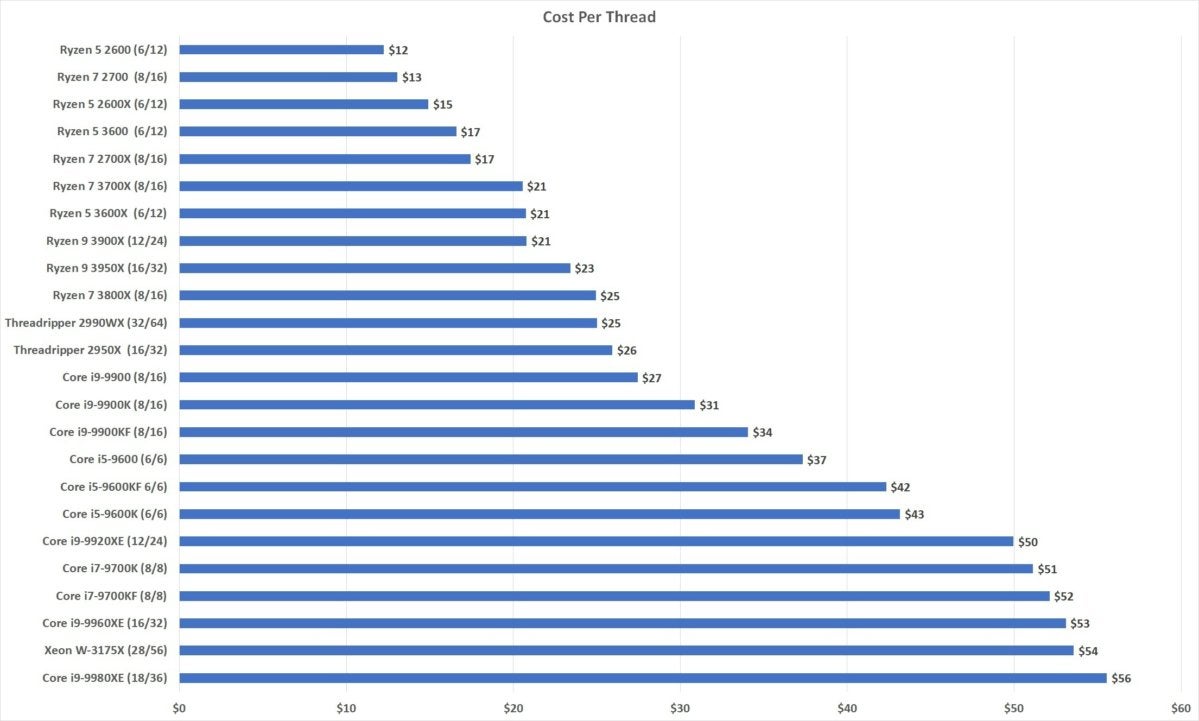

How much value? To find out just how much you’re paying per thread, we mapped out the CPUs based on how much each thread costs. As you can see, AMD owns Intel in this category. The Ryzen 9 3900X works out to $21 per thread; the Core i9-9900K, at $31 per thread, isn’t even in the same ballpark.
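The per-thread math is simple enough to check yourself. The sketch below uses only the list prices and thread counts quoted in this review (24 threads for the 12-core Ryzen 9, 16 for the 8-core Core i9). The dictionary layout and function name are our own illustrative choices.

```python
# Cost per thread = list price / hardware thread count.
# Prices and thread counts are the ones quoted in this review.

cpus = {
    "Ryzen 9 3900X": {"price_usd": 499, "threads": 24},  # 12 cores, 2 threads each
    "Core i9-9900K": {"price_usd": 488, "threads": 16},  # 8 cores, 2 threads each
}

def cost_per_thread(price_usd: float, threads: int) -> float:
    """Dollars paid for each hardware thread."""
    return price_usd / threads

results = {name: cost_per_thread(**spec) for name, spec in cpus.items()}
for name, dollars in results.items():
    print(f"{name}: ${dollars:.2f} per thread")
```

That works out to roughly $20.79 and $30.50, which round to the $21 and $31 figures above.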

IDG

None of the cost-per-thread or fancy 7nm process matters, though, if the performance isn’t there. Let’s get on with what you came here for: to find out how fast the Ryzen 9 3900X is.

How we tested

For this review we decided to focus in on three key CPUs. First we use AMD’s 2nd-generation Ryzen 7 2700X as a baseline. Second we bring in the main competitor at $488: Intel’s mighty Core i9-9900K. The last chip is none other than AMD’s $499 Ryzen 9 3900X.

We tested each CPU in parallel, with the Ryzen 7 2700X mounted in an MSI X470 Gaming M7 AC, the Core i9-9900K into an Asus Maximus XI Hero, and the Ryzen 9 3900X into an MSI X570 Godlike.

For graphics, the initial CPU and some game testing were performed with Founders Edition GeForce GTX 1080 cards. We ran additional game testing with Founders Edition GeForce RTX 2080 Ti cards.

All three builds used the latest available UEFI/BIOS and drivers and fresh installs of Windows 10 Professional 1903. This version of Windows is particularly important, because AMD says 1903 now includes scheduler optimizations that help it dispatch threads in a more efficient manner on Ryzen 3000.

Remember: AMD’s CPUs are constructed with small cities of CPU cores, linked by connections that are high-speed but still slower than access within a city. In older versions of Windows, the scheduler would dispatch one thread to a core in one cluster. Because it was never designed for multi-die designs, it would then send a second thread to a different cluster of CPU cores. This would sap some performance, as the threads would have to deal with crossing between the two clusters instead of both running in the same cluster. That’s now fixed, and Windows 1903 will send threads to the same cluster of CPU cores when possible. This, AMD claims, can yield up to a 15-percent boost. Officials also say the gain isn’t uniform across applications, however, so your mileage will vary.
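A toy model makes the difference between the two placement policies easier to see. To be clear, this is not how the Windows scheduler is implemented. The layout of four clusters of three cores, the two policy functions, and their names are all simplifying assumptions made for illustration.

```python
# Toy model of thread placement across core clusters. This is NOT the Windows
# scheduler; the cluster layout and both policies are simplified assumptions.

def place_round_robin(num_threads: int, clusters: int) -> list[int]:
    """Topology-unaware: hand each new thread to the next cluster in turn."""
    return [i % clusters for i in range(num_threads)]

def place_cluster_first(num_threads: int, clusters: int, cores_per_cluster: int) -> list[int]:
    """Topology-aware: fill one cluster's cores before moving to the next."""
    return [(i // cores_per_cluster) % clusters for i in range(num_threads)]

def clusters_touched(placement: list[int]) -> int:
    """How many distinct clusters the threads ended up on."""
    return len(set(placement))

CLUSTERS, CORES_PER_CLUSTER = 4, 3  # assumed layout, for illustration only

naive = place_round_robin(3, CLUSTERS)
aware = place_cluster_first(3, CLUSTERS, CORES_PER_CLUSTER)

print("round-robin  :", naive, "->", clusters_touched(naive), "clusters")
print("cluster-first:", aware, "->", clusters_touched(aware), "clusters")
```

With three threads, the round-robin policy scatters them over three clusters, so any data they share has to cross the slower links. The cluster-first policy keeps all three in one cluster.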

Gordon Mah Ung

AMD’s new 12-core Ryzen 9 3900X is the new leader among mainstream performance CPUs.

While we used the same amount of DDR4 in dual-channel modes in all three builds, we did vary one aspect by running the Core i9-9900K and Ryzen 7 2700X with 16GB of DDR4/3200 CL14, and the Ryzen 9 3900X with 16GB of DDR4/3600 CL15. We wanted to test the Ryzen 9 with its optimal memory clock, which is 3,600MHz. We intend to test it at 3,200MHz as well. Due to time constraints, we’re initially showing only DDR4/3600 performance and will update it with DDR4/3200 once time permits. We are told by AMD, however, that DDR4/3200 CL14 should yield roughly single-digit performance differences compared to DDR4/3600 CL15.
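For a sense of how close these two memory kits are, you can convert the CAS rating into nanoseconds. The sketch below uses the standard first-word latency arithmetic and looks only at CAS latency. It says nothing about bandwidth or the other memory timings, so treat it as a rough comparison rather than a performance prediction. The function name is ours.

```python
# First-word CAS latency in nanoseconds for DDR memory.
# DDR transfers twice per clock, so one clock cycle lasts 2000 / data_rate ns
# when data_rate is given in MT/s (the "3200" in DDR4/3200).

def cas_latency_ns(data_rate_mts: int, cas_cycles: int) -> float:
    """Time to the first word of data, counting CAS latency only."""
    cycle_time_ns = 2000 / data_rate_mts
    return cas_cycles * cycle_time_ns

kits = {"DDR4/3200 CL14": (3200, 14), "DDR4/3600 CL15": (3600, 15)}
latencies = {name: cas_latency_ns(rate, cl) for name, (rate, cl) in kits.items()}

for name, ns in latencies.items():
    print(f"{name}: {ns:.2f} ns")
```

The two kits land within about half a nanosecond of each other on this measure, with the DDR4/3600 kit slightly ahead and also offering more raw bandwidth.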

The other variable here is storage. The Ryzen 7 and Core i9 were both tested with very fast MLC-based Samsung 960 Pro 512GB SSDs at PCIe Gen 3 speeds. The Ryzen 9 3900X, however, is the first CPU and platform we’ve seen to support PCIe 4.0. Because it is a key feature of the new platform, we used a 2TB Corsair MP600 PCIe 4.0 SSD plugged directly into the CPU’s PCIe lanes. For the tests we’re running, storage should not impact CPU performance.

Gordon Mah Ung

Corsair’s MP600 supports PCIe 4 on AMD’s new Ryzen 3000 CPUs.

To MCE or not?

For this review, as with our original Core i9-9900K review, we were torn as to whether to enable the “multi-core enhancement” feature. MCE is a motherboard-enabled feature that runs Intel “K” CPUs at higher clock speeds, while using more power and producing more heat. What offends some is that MCE is technically a violation of the letter of Intel’s law and considered an “overclock.”

You’d think that makes turning it off an easy decision. The problem is, just about every mid- to high-end Intel motherboard ships with MCE set to auto out of the box. That means any review of these new CPUs with the feature explicitly set to off is not quite an honest portrayal of the Core i9-9900K speed that most consumers will experience.

Leaving it on gets even stickier, because every motherboard maker implements it slightly differently. There’s no easy way to draw an exact bead on performance with MCE on.

In the end, our tests are all run with MCE off on the Intel CPU, and AMD’s somewhat similar Precision Boost Overdrive turned off as well. We’ll explore this in greater depth in another story, but for now note that running with MCE off tends to hurt Intel CPUs more than running with PBO off on AMD CPUs.

Got all that? Then keep reading to see charts, charts, and more performance charts.

Ryzen 9 3900X 3D Modeling Performance

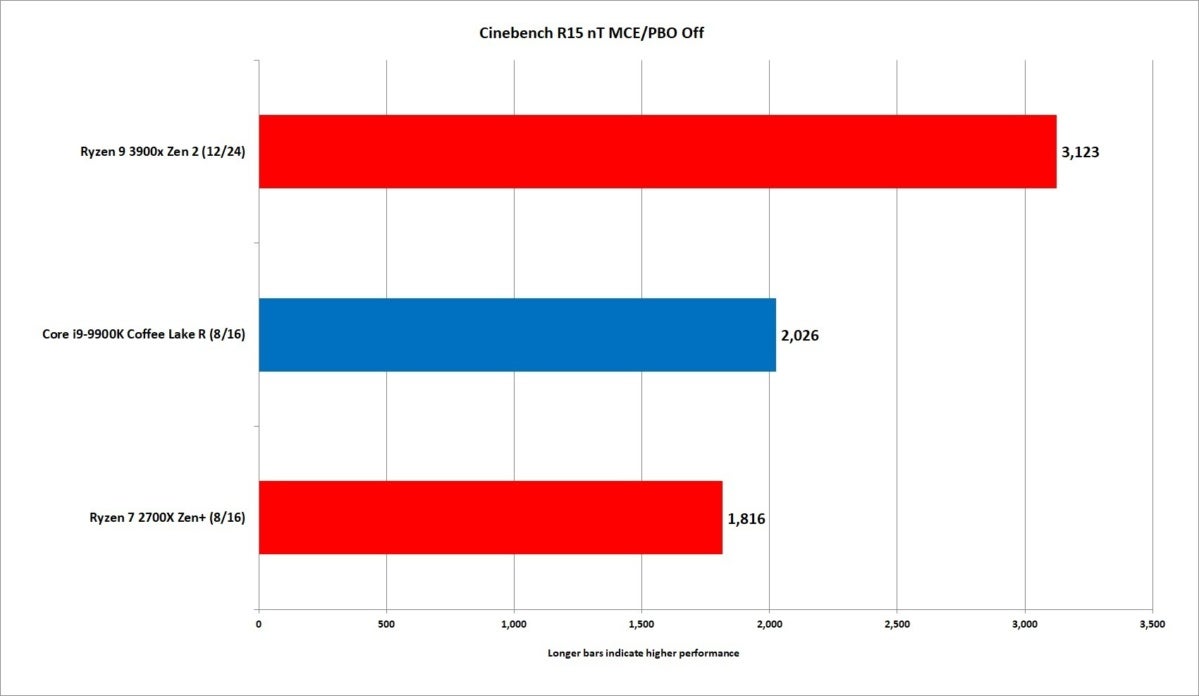

Up first is the old standby of Maxon’s Cinebench R15. This benchmark is built on the same engine used in Maxon’s Cinema 4D modeling and animation application. Cinema 4D is also built into Adobe’s Premiere and After Effects applications.

IDG

No surprise: 12 cores easily outgun 8 cores, making the Ryzen 9 3900X the easy winner here.

In the no-surprise category, we see the 12-core Ryzen 9 3900X simply demolish the 8-core Core i9-9900K to the tune of 42 percent. With 50 percent more threads at its disposal (24 vs. 16), we kinda expected this. Still, this is impressive performance and to be lauded.

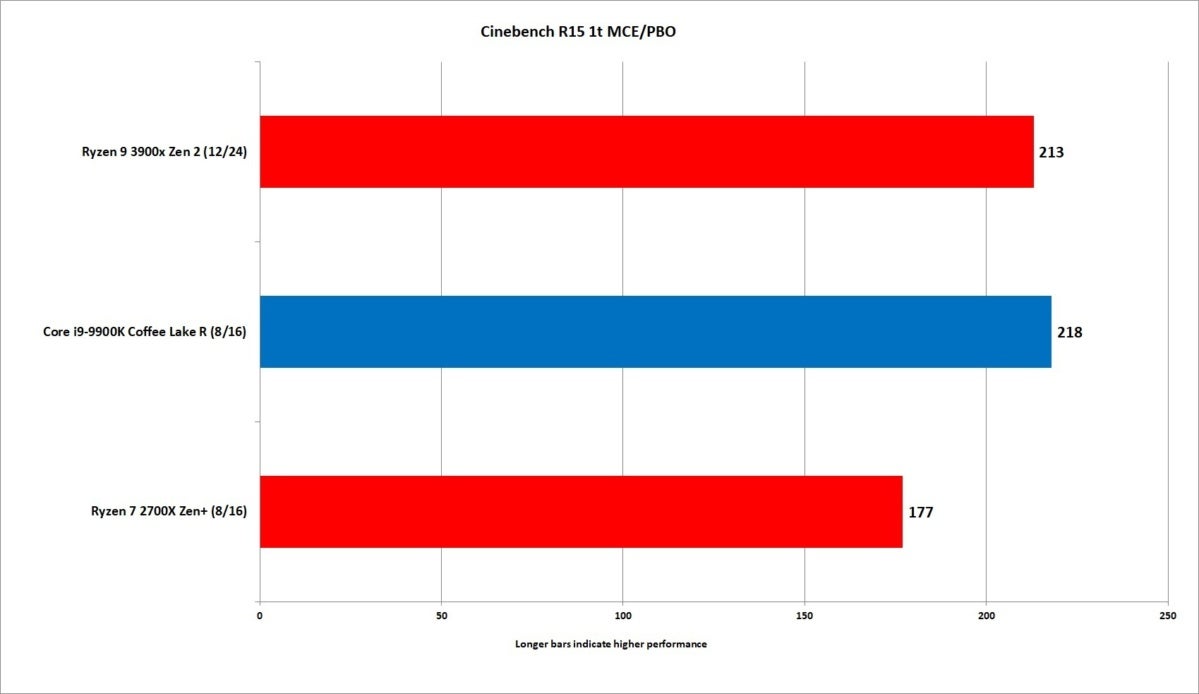

Perhaps more important is the single-threaded performance of the Ryzen 9 3900X. Its boost clock of 4.6GHz vs. the Core i9-9900K’s 5GHz gives the Intel part about an 8-percent advantage in pure clock speed.

IDG

That’s impressive single-threaded performance for the Ryzen 9 3900X.

Not all megahertz are the same, though. With AMD’s much-improved instructions per clock—essentially how efficient the chip is—the Ryzen 9 is but 2 to 3 percent slower than the Core i9.
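You can back out a rough per-clock comparison from those numbers. The sketch below assumes performance scales as IPC times clock speed and that both chips actually hold their rated boost clocks during the single-threaded run. Neither assumption is guaranteed in practice, so read the result as a ballpark, not a measurement.

```python
# Back-of-the-envelope IPC comparison, assuming performance = IPC x clock
# and that both chips sustain their rated boost clocks (an assumption).

def implied_ipc_ratio(perf_ratio: float, clock_a_ghz: float, clock_b_ghz: float) -> float:
    """IPC of chip A relative to chip B, given A's performance relative to B."""
    clock_ratio = clock_a_ghz / clock_b_ghz
    return perf_ratio / clock_ratio

RYZEN_BOOST_GHZ, CORE_BOOST_GHZ = 4.6, 5.0

ipc_ratios = {}
for deficit in (0.02, 0.03):  # Ryzen 9 is 2 to 3 percent slower overall
    ipc_ratios[deficit] = implied_ipc_ratio(1 - deficit, RYZEN_BOOST_GHZ, CORE_BOOST_GHZ)
    print(f"{deficit:.0%} slower overall -> "
          f"{(ipc_ratios[deficit] - 1) * 100:.1f}% more work per clock")
```

Under those assumptions, the Ryzen 9 is doing roughly 5 to 7 percent more work per clock than the Core i9 in this test.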

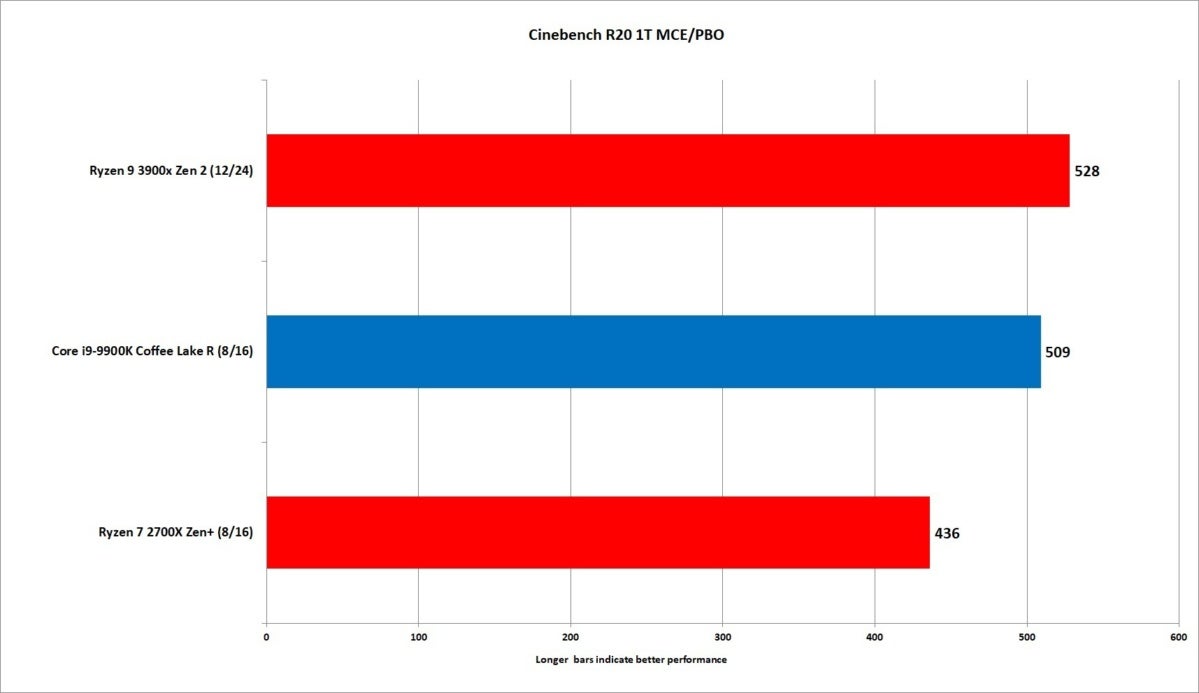

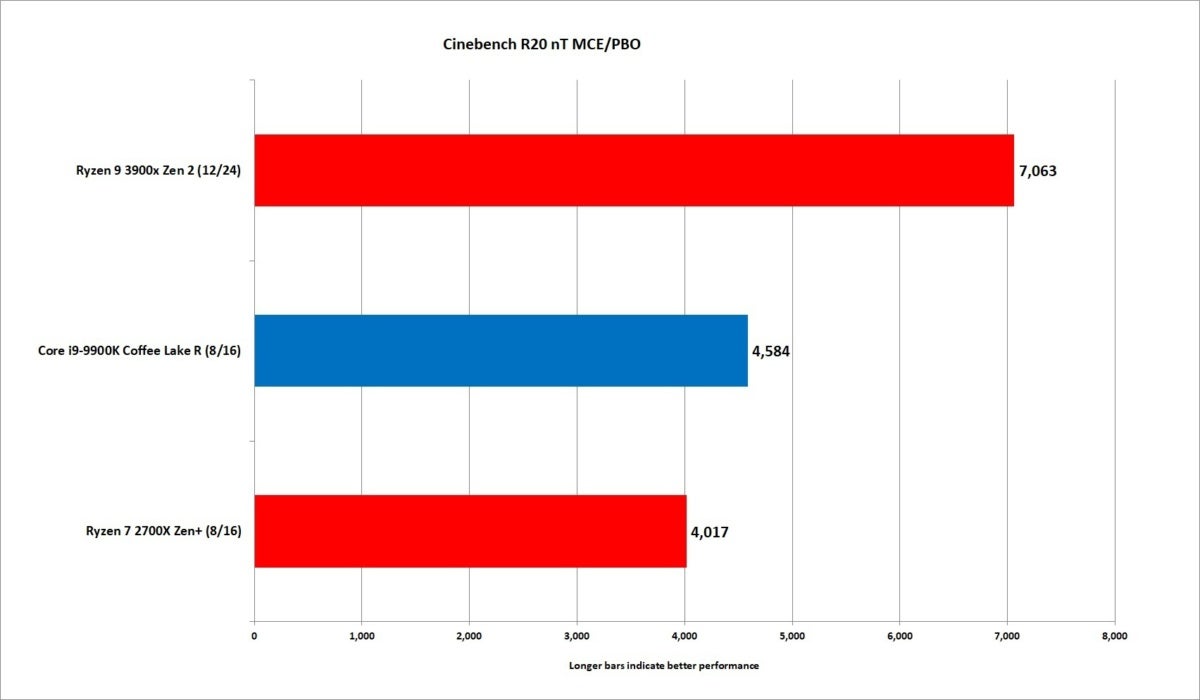

Cinebench R15, however, is fairly old, having come out in 2013, so we also measured all three CPUs using the new Cinebench R20. Intel generally is a bit faster in this updated test—against older Zen+ cores.

IDG

Shifting to the updated Cinebench R20, the Ryzen 9 3900X actually pulls ahead in single-threaded performance.

The situation changes for the Zen 2 cores in the Ryzen 9 3900X, resulting in a 3-percent bump in single-threaded performance. That’s a nice shift for AMD.

The tables turn for multi-threaded performance. The 12-core AMD part smashes the 8-core Intel part by 42 percent.

IDG

No surprise: The Ryzen 9 3900X essentially runs the Core i9 off the field in multi-threaded performance.

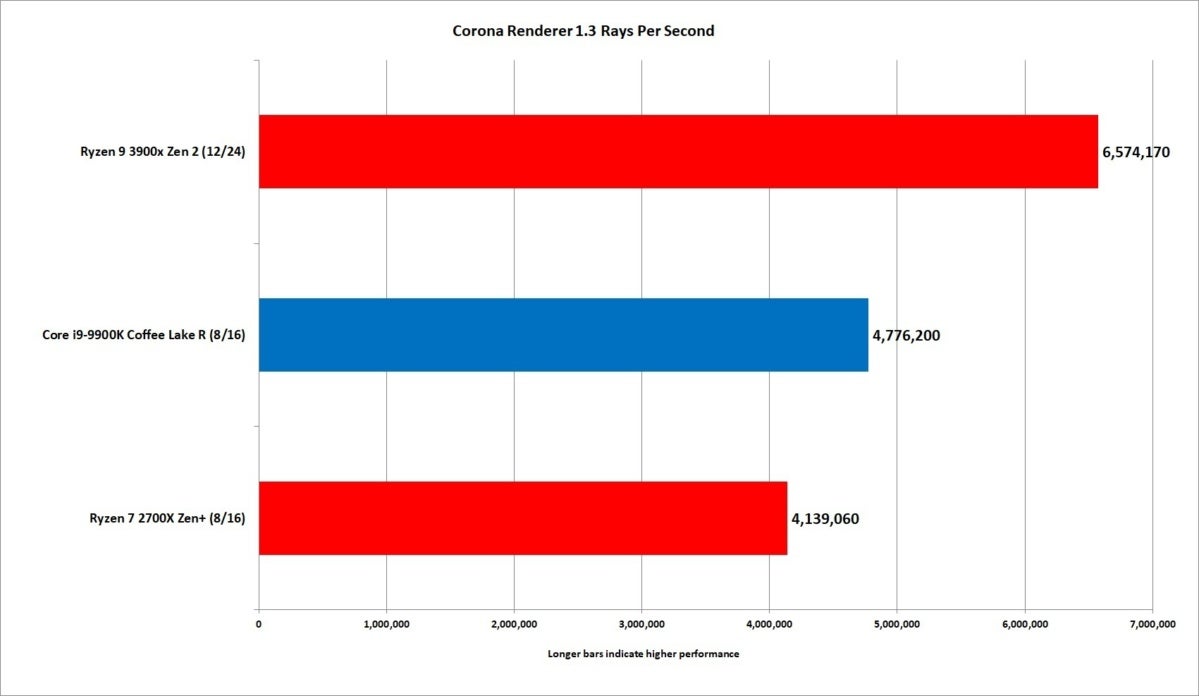

We also tested the chips using Chaos Group’s Corona Renderer benchmark. Corona is a “modern unbiased photorealistic renderer,” which refers to the precision in which it renders the scene—not unbiased based on the hardware it’s run on.

IDG

The Corona modeler likes 12 cores more than 8 cores.

In this multi-threaded test, we see the Ryzen 9 outrun the Core i9 by about 32 percent. And yup, the body blows keep coming.

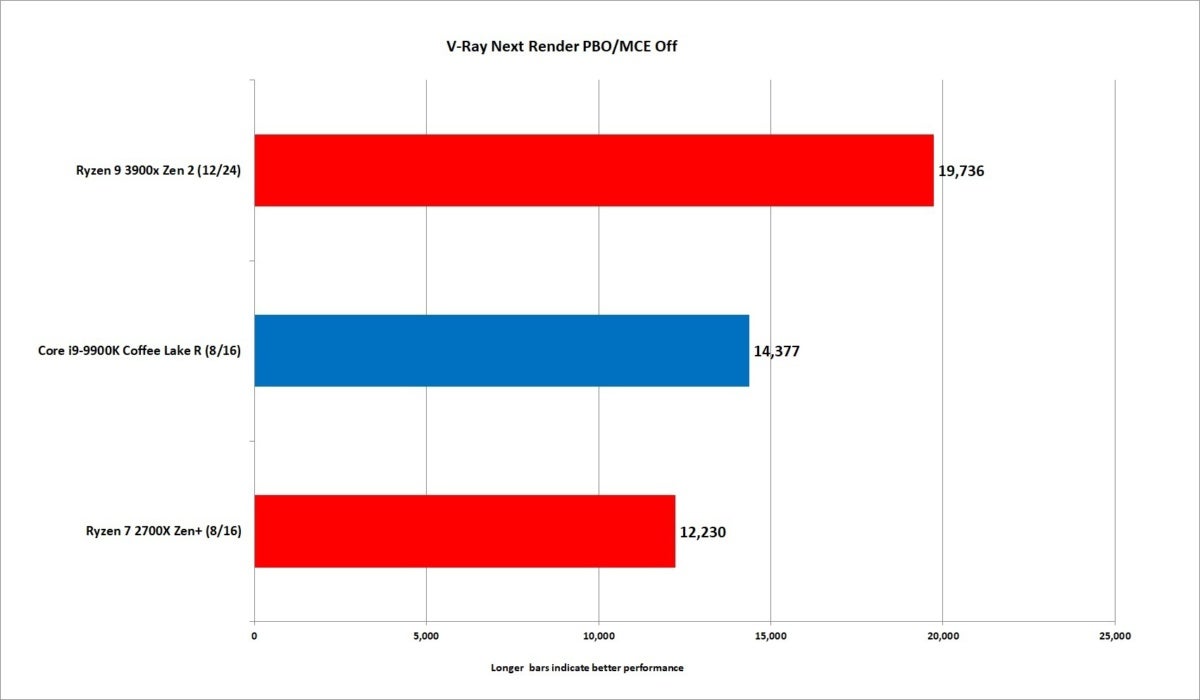

We run V-Ray Next, which is another renderer from the Chaos Group. The result? About 31 percent in favor of the Ryzen 9 over the Core i9.

IDG

Bored yet? V-Ray Next reinforces what we’re seeing in other modeling apps.

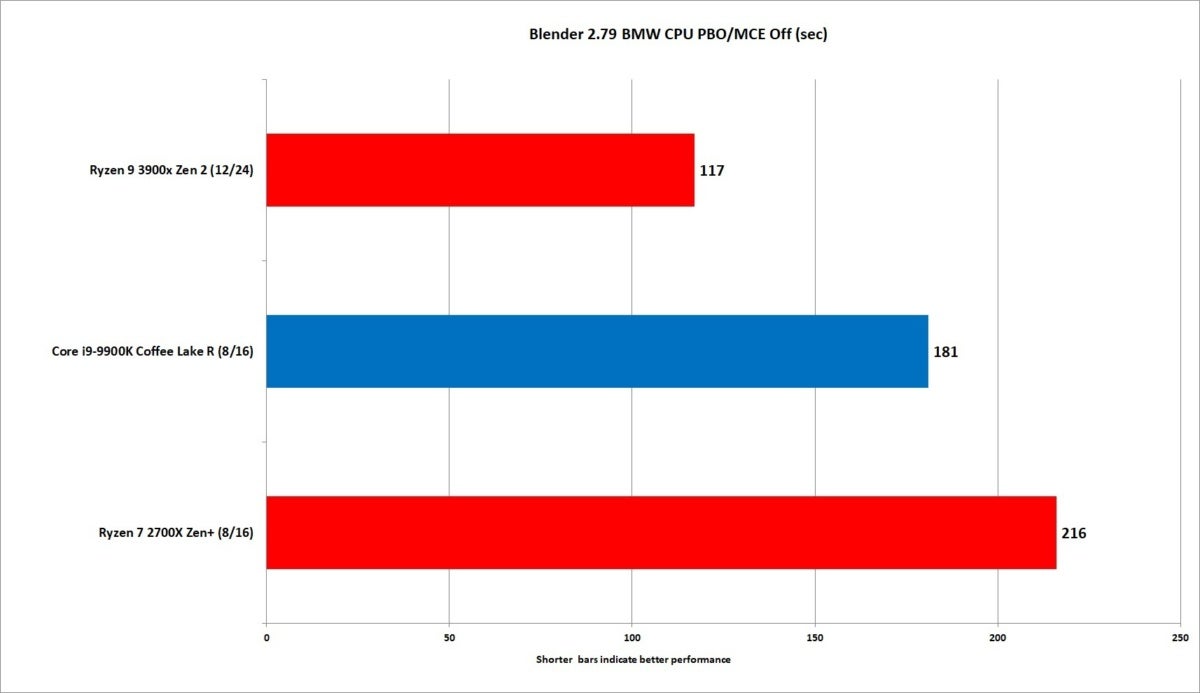

What about the free and popular 3D modeler Blender 2.80, using the intensive Gooseberry test file? How about 43 percent in favor of the Ryzen 9 over the Core i9.

IDG

Dayum. The Ryzen 9 3900X is fast.

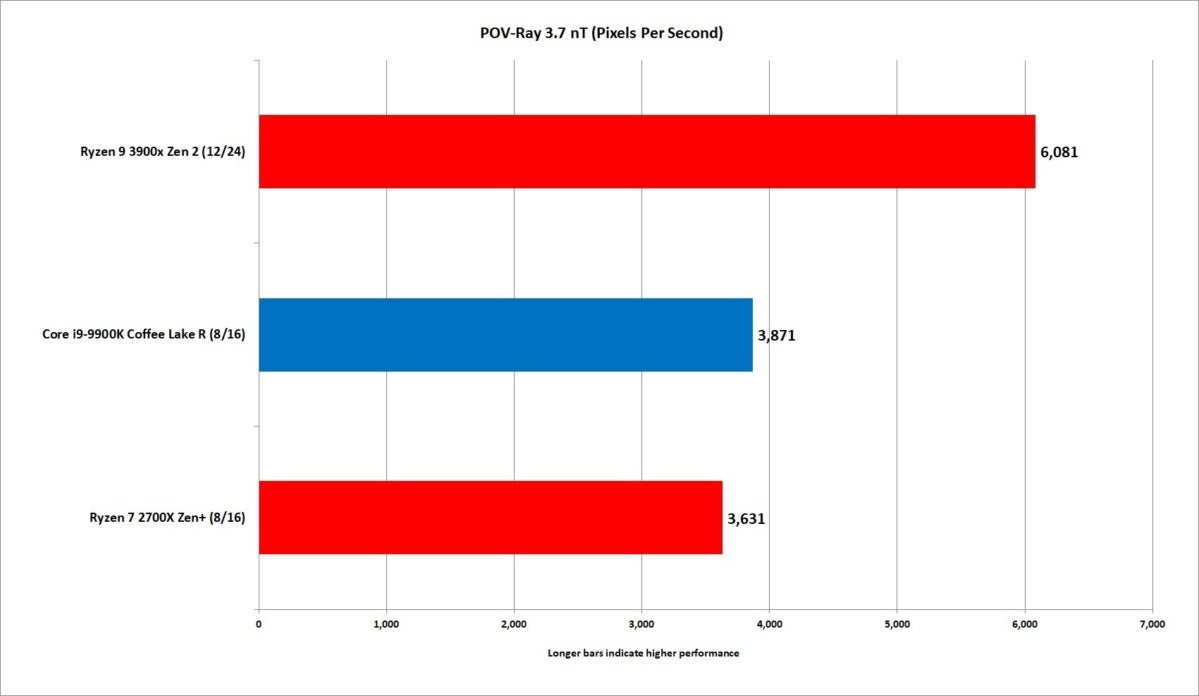

We’ll close off the 3D tasks with the oldie-but-goodie POV-Ray 3.7 benchmark. The Persistence of Vision Raytracer is an open-source, free tool that has roots in the Amiga platform. Using the application’s built-in test, we saw the Ryzen 9 run about 44 percent faster than the Core i9 in multi-threaded mode.

IDG

What a shocker: The Ryzen 9 mops the floor with the Core i9.

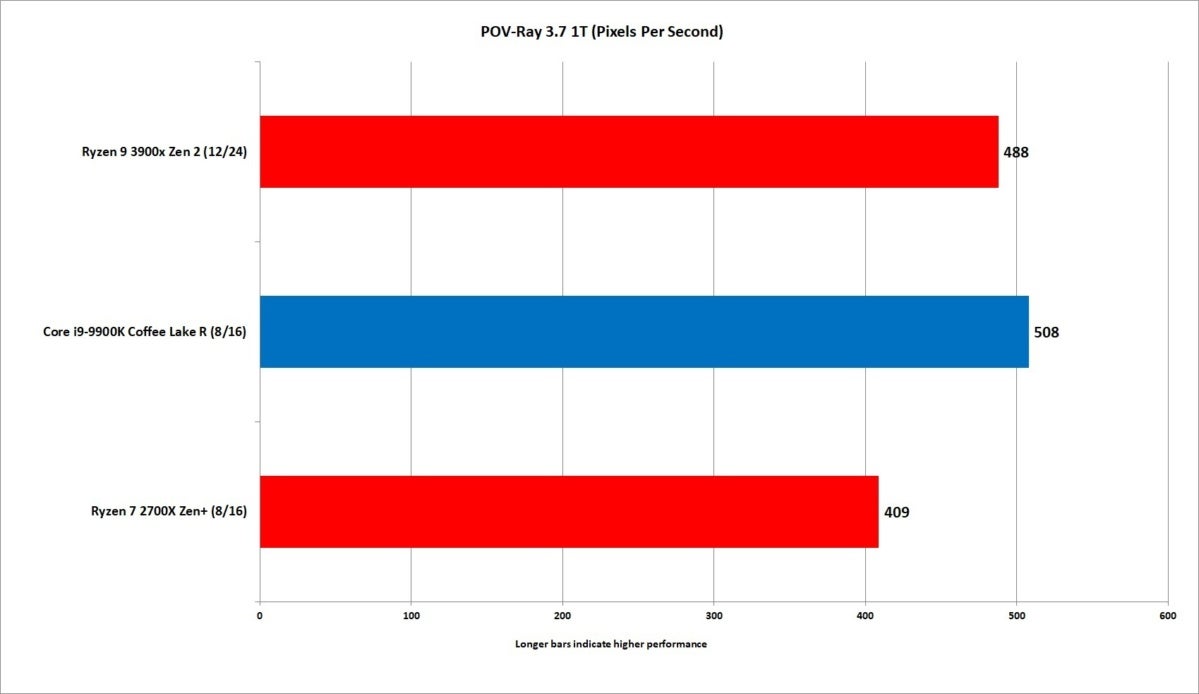

POV-Ray also has a single-threaded benchmark, in which the Core i9 and its 5GHz clock eke out a 4-percent win over the 4.6GHz Ryzen 9. A win is a win, but most are likely to say big whoop.

IDG

The 5GHz boost clock of the Core i9 gives it a very slight edge over the Ryzen 9 in POV-Ray when set to test a single thread.

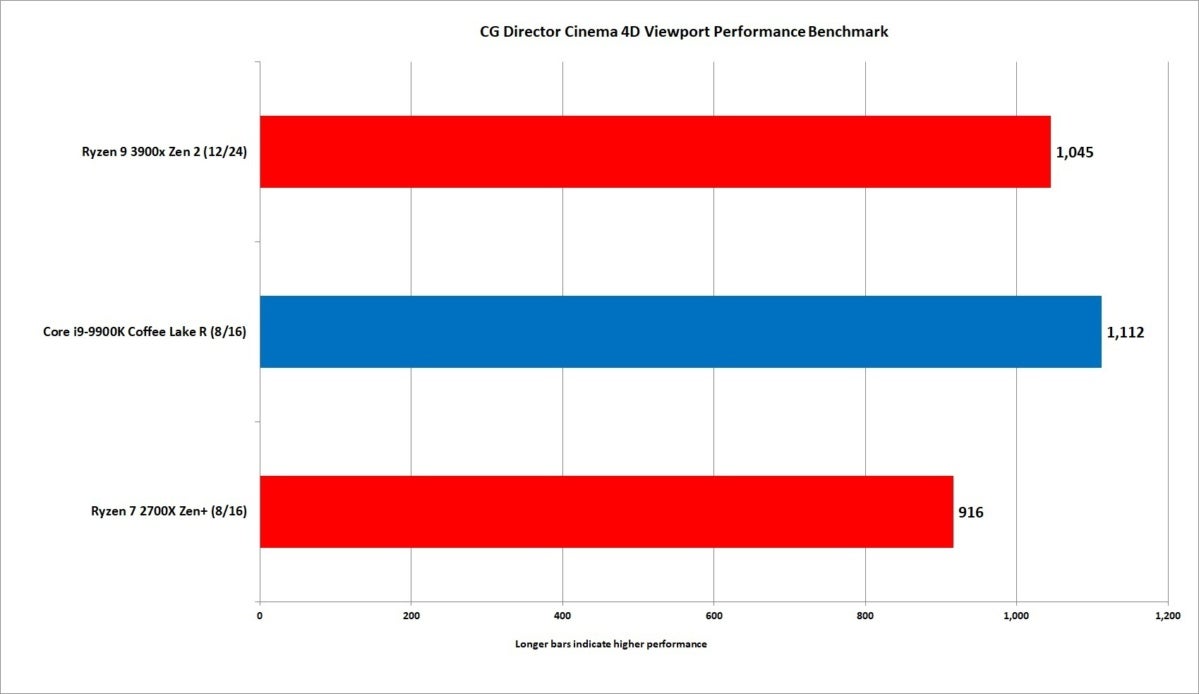

Viewport Performance

Before we jump to the next section, we did want to explore a protest we had heard from Intel fans. We’ll summarize it as, “we know the simple math of 12 > 8 is true, but for 3D artists, the time waiting for rendering is not as critical as the seat time when you actively need faster responsiveness.” That is, system “snappiness” when trying to do precise modelling is just as important, if not more important. In that world, the math is 5GHz > 4.6GHz, so Intel wins in that department.

To test that theory we looked to CGDirector.com’s free Cinema4D Viewport Performance benchmark. CGDirector is a PC enthusiast site for 3D content creators, video editors, and other users who work in graphics-intensive applications.

For a better interface or “viewport” experience for 3D artists, CGDirector said the CPU is the chief bottleneck—and not the GPU. That means chips with higher frequency and higher IPC typically are more important.

“In this Cinema 4D Viewport Benchmark, we measure the FPS of a typical Scene that uses common 3D Objects from Cinema 4D Objects in a hierarchy,” the website said.

For this test, we ran the Cinema 4D Viewport benchmark with a demo version of Cinema 4D R20.

Some of the results the site has posted register a 7th-gen Kaby Lake Core i7-7700K with a boost clock of 4.5GHz at 1,049, and a 16-core Skylake X Core i9-9960X with a boost clock of 4.4GHz at 1,045. A Xeon X5450 with a relatively low boost clock of 3GHz scores 418 in the test.

IDG

Using CGDirector’s Viewport Performance test, the Core i9 has the win, but the Ryzen 9 isn’t too far behind, is it?

Because this test is new to us, we’re not putting too much weight on it yet. Based on its results, however, the Core i9 does win. Assuming some truth to the theory that 3D artists need UI responsiveness more than shorter rendering wait times, Intel has the edge.

It’s worth noting that even in a loss, the Ryzen 9 3900X is not that far behind. And frankly, if you’re looking for the best of both worlds, with slightly slower Viewport performance but much, much greater rendering performance, the Ryzen 9 comes out in front. It’s really up to the individual tastes of the artist.

Keep reading for content creation and other tests.

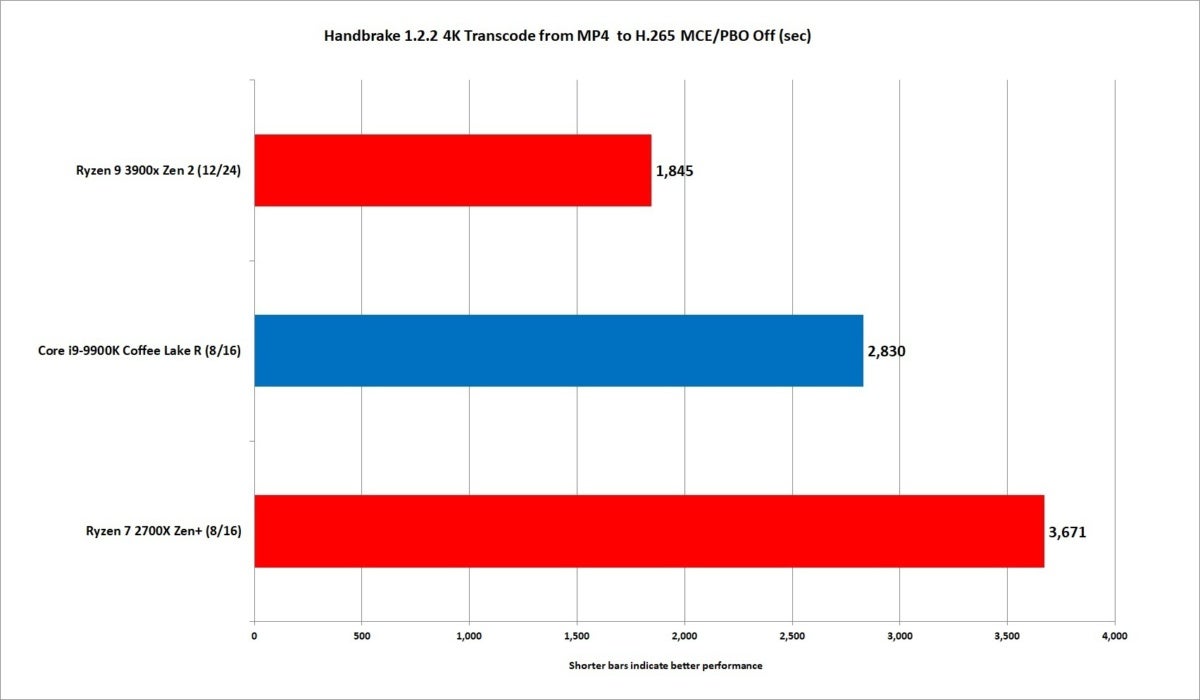

Ryzen 9 3900X encoding performance

These next tests are for those who edit or transcode video. First we use the latest version (1.2.2) of the free and popular HandBrake app to convert a UHD 4K video that was originally encoded in MPEG4 in a .MOV container, using the H.265 preset at the same resolution. The original file is about 6.3GB in size, which compresses down to about 600MB. HandBrake loves CPU cores and threads, and it typically scales nicely as you up the core count.

The Ryzen 9 3900X finishes an impressive 43 percent ahead of the Core i9-9900K on our conversion. Even on the Ryzen 9 3900X, it’s a beefy 30 minutes to finish the conversion.

IDG

The Ryzen 9 3900X easily wallops the Core i9 when doing a 4K encode using the H.265 codec.

It’s another big, big win for the Ryzen 9 3900X for CPU-based encoding. But we’d be remiss if we didn’t also mention that the one ace the Core i9-9900K has up its sleeve is QuickSync encoding. Rather than using the general-purpose CPU processors to convert the video, the Core i9 has a built-in GPU with fixed function processors that do only one thing, but do it stupidly fast.

Convert the video on the eight CPU cores of the Core i9, and you’re looking at a 47-minute wait. Convert it on the 12 CPU cores of the Ryzen 9 and you’re looking at about 30 minutes, just enough for a short lunch. But use QuickSync’s H.265 fixed function, and you’re looking at 4 minutes. Just barely enough time to grab a cup of joe.

Not all features or profiles in HandBrake use QuickSync, but when they do, damn.

In the end though, when you’re doing a CPU-based encode, Ryzen 9 wins hands down.

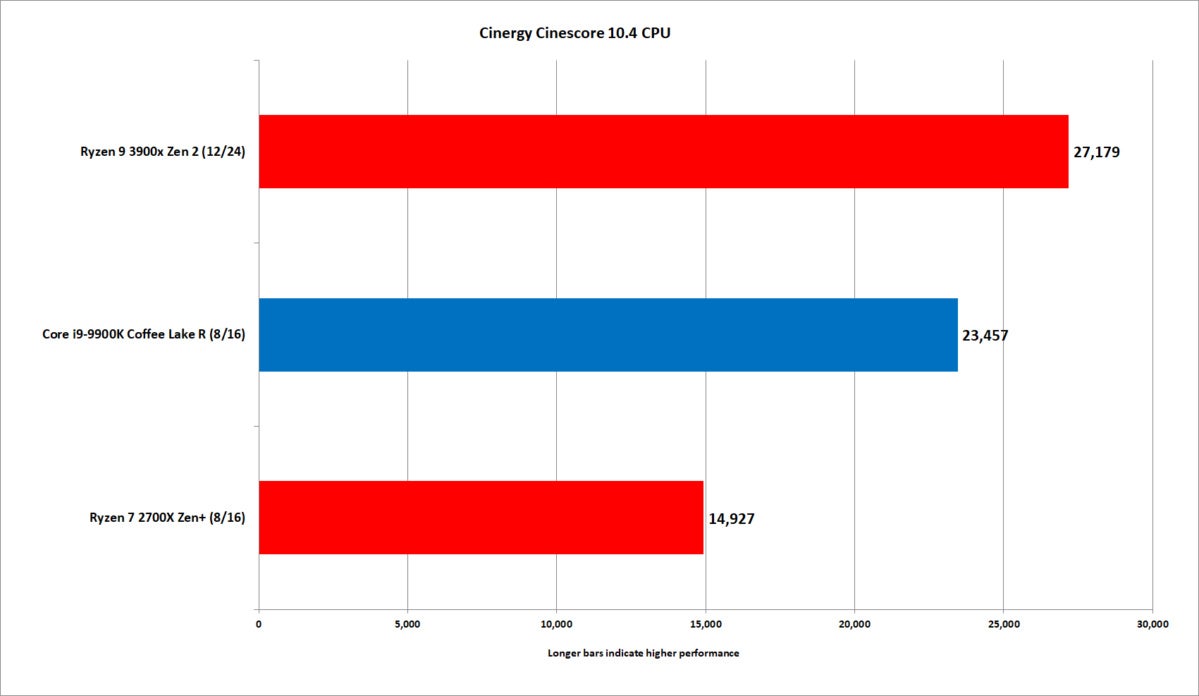

Our next test is new to us but doesn’t require the pain of installing a full video editing suite to run. Cinegy’s free Cinescore was created to give the broadcast industry a quick and easy way to assess CPU and GPU performance. It runs tests from SD to HD, UHD and 8K across both CPU and GPU. Cinescore also uses codecs as varied as XDCAM, MPEG2, H.264, H.265, DVCPro100, and AVC_Intra, as well as Cinegy’s own “high-performance” Daniel2 codec.

Our machine configurations were the same as for previous tests, and all of the Cinescore results were obtained with Nvidia GeForce RTX 2080 Ti cards. We’re only reporting the composite score for SD, HD, UHD and 8K runs. As you can see, there’s not much of a surprise, and the Ryzen 9 3900X again handily outruns the Core i9 chip.

IDG

Using Cinegy Cinescore 10.4, the Ryzen 9 continues to dominate the Core i9 chip.

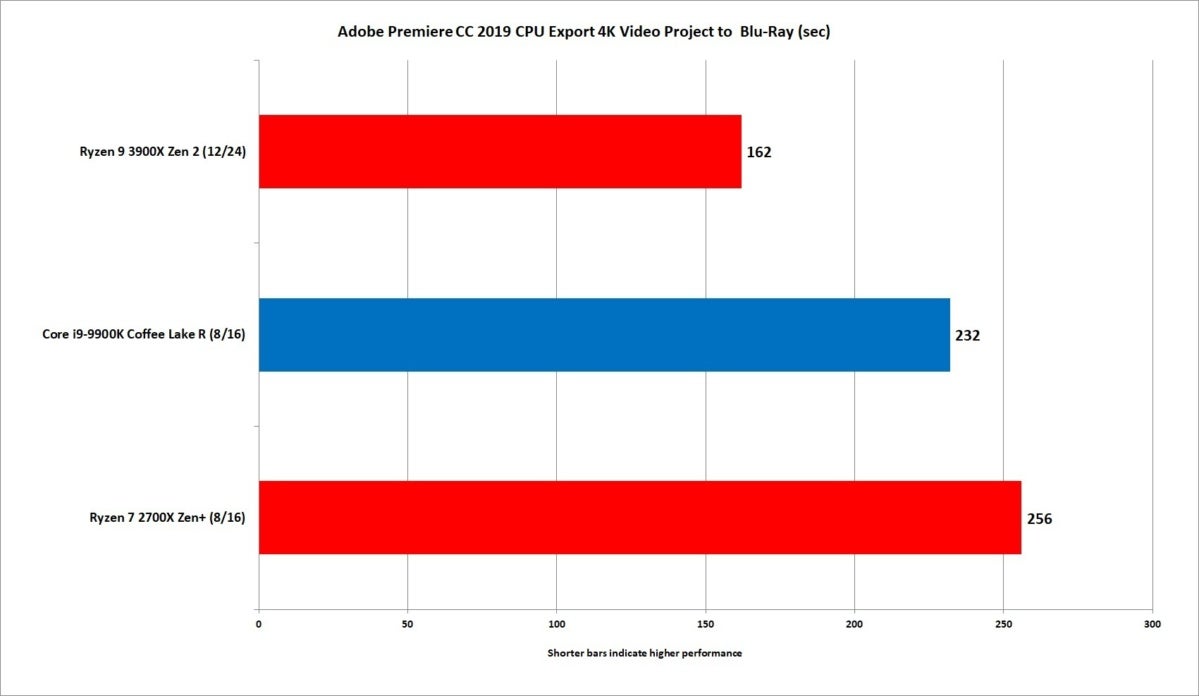

We’ll end our encoding test with Adobe’s popular Premiere CC 2019. For this test we use footage shot by PCWorld’s video department for a story on HP’s Chromebook 13. The video was shot at 4K resolution using a Sony Alpha A7 II, which was then edited for the video you can view here. For this test, we use the latest version of Premiere CC 2019 and export the project using the Blu-ray preset with the Maximum Render Quality flag set. All three projects were read from and written to the same Plextor M.2 SSD to remove storage as a variable in the test.

IDG

The Ryzen 9 3900X’s extra cores let it beat up on the Core i9 in Premiere CC 2019.

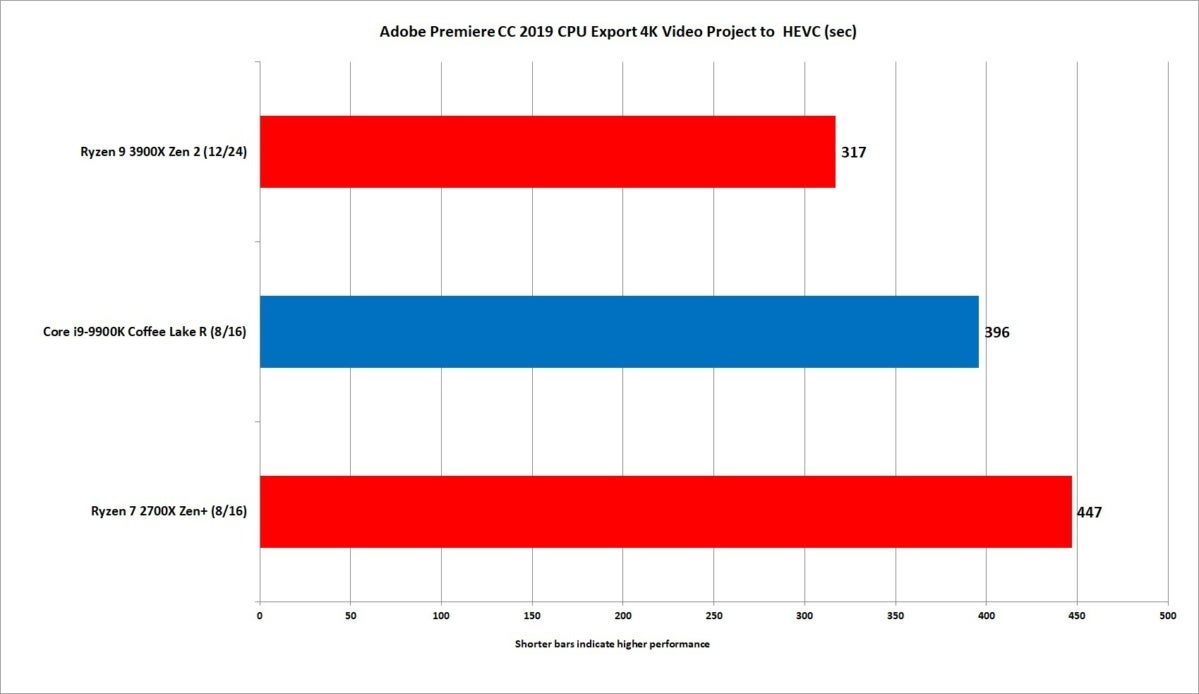

We exported video using HEVC as well. The Ryzen 9 3900X continues to blow by the Core i9, but the gap closes up a little. Still, a shorter bar means less time wasted no matter how you cut it.

IDG

We also exported our Premiere project using Premiere’s HEVC encoder, which is licensed from MainConcept.

Ryzen 3000 Photoshop Performance

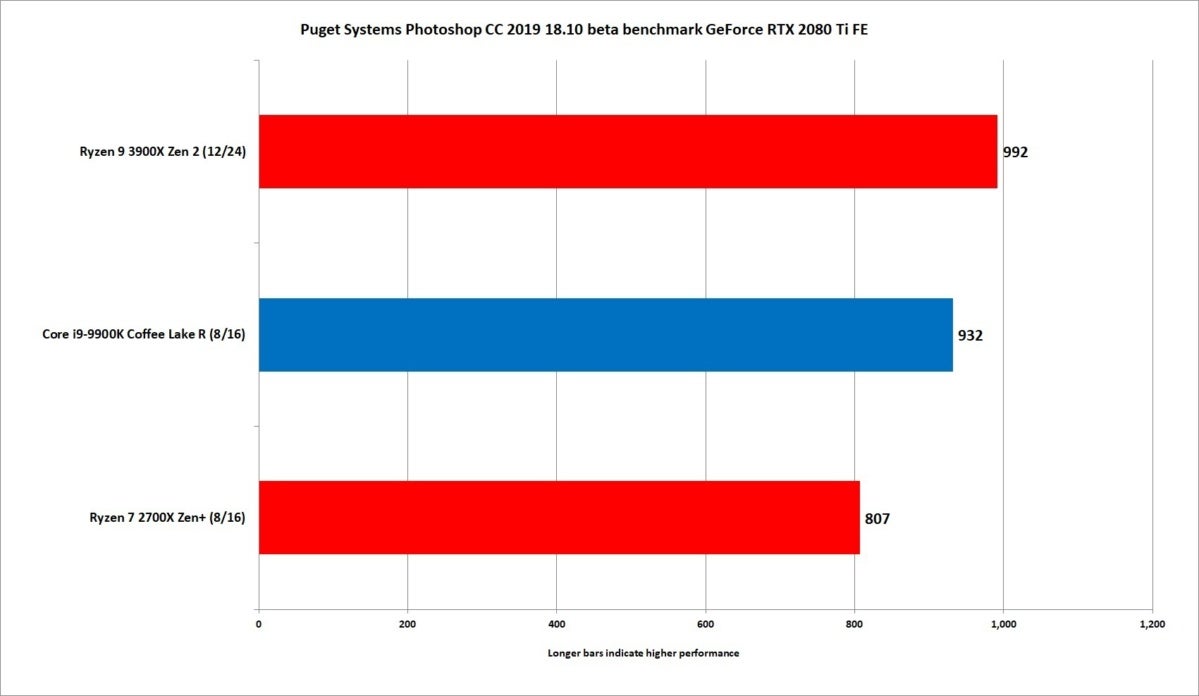

We don’t normally use Adobe’s popular Photoshop as a performance test because, well, you don’t normally net the same dividends you do with modelling or video encoding. It’s an application that’s mostly balanced on single-threaded performance.

With the increased IPC and slightly higher clocks of the Ryzen 9 3900X, we did want to see if it could hang with the mighty Core i9’s high clocks. For this test, we used Puget Systems’ free Photoshop benchmark script and selected the Photoshop Extended script run. The Ryzen 9 3900X’s score of 992 is very respectable and about 6 percent faster than the Core i9-9900K’s overall score of 932.

IDG

The performance gap is slimmer, but the Ryzen 9 3900X still wins.

Ryzen 3000 Compression tests

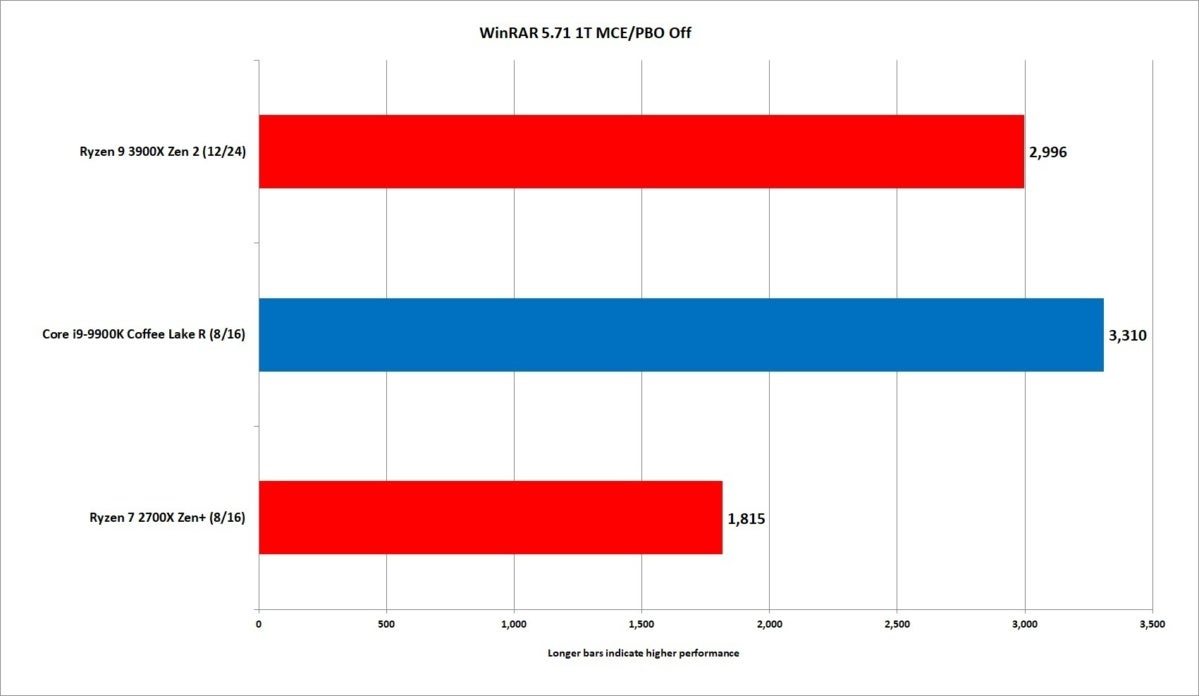

Moving on to compression, we’ll kick it off with RARlab’s WinRAR 5.71. The program features a built-in benchmark, which we first run in single-threaded mode. The results show a big boost for the new Ryzen 3000 chips over the Ryzen 2000 chips, as the new 4.6GHz boost Ryzen 9 3900X draws fairly close to the 5GHz Core i9-9900K.

IDG

The Ryzen 9 3900X actually offers up a very decent uplift over the previous Ryzen 7 2700X.

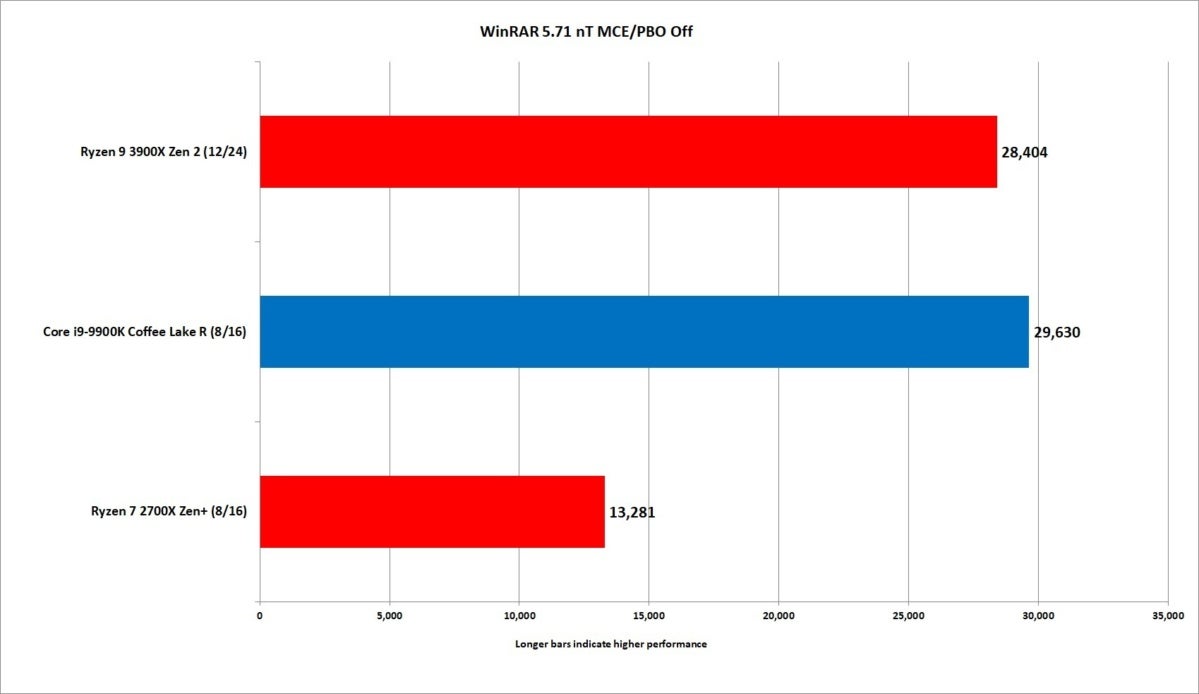

Things go sideways when we shift to multi-threaded performance. While the Ryzen 9 3900X actually turns in a decent result, it falls slightly behind the Core i9-9900K despite having four more cores.

We’ll note that WinRAR typically hasn’t liked Ryzen-based CPUs (or Intel’s Skylake X chips, for that matter). This result is a pretty decent uptick for the Ryzen 9 3900X overall, just not the victory we expected after seeing how well the CPU performed elsewhere.

IDG

This WinRAR result can be seen as good or bad in some ways. WinRAR has traditionally favored Intel CPUs, as we can see from the dismal performance of the Ryzen 7 2700X. The fact that the Ryzen 9 3900X is just about tied with the Core i9 can be seen as an improvement.

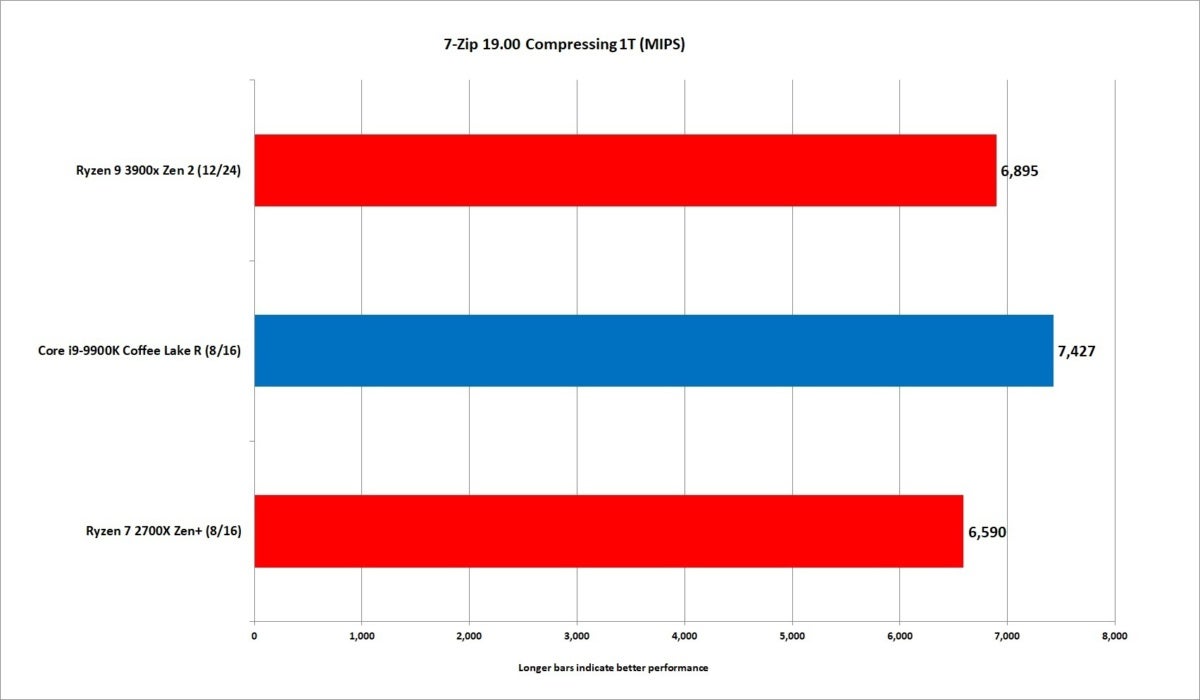

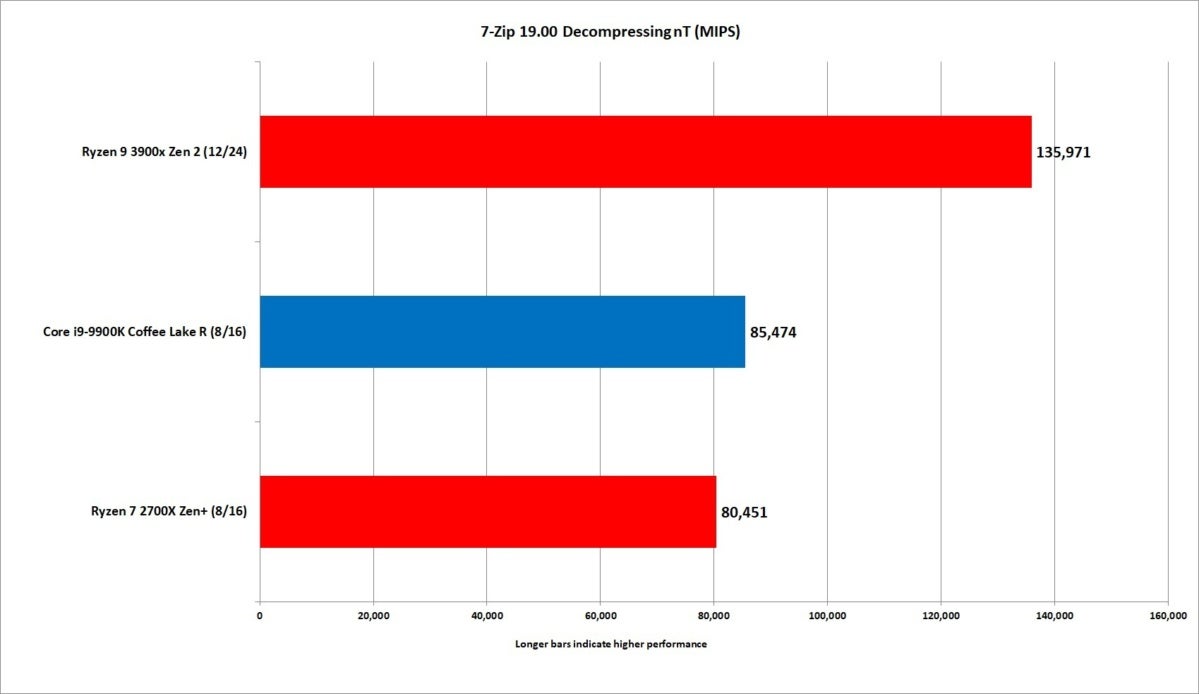

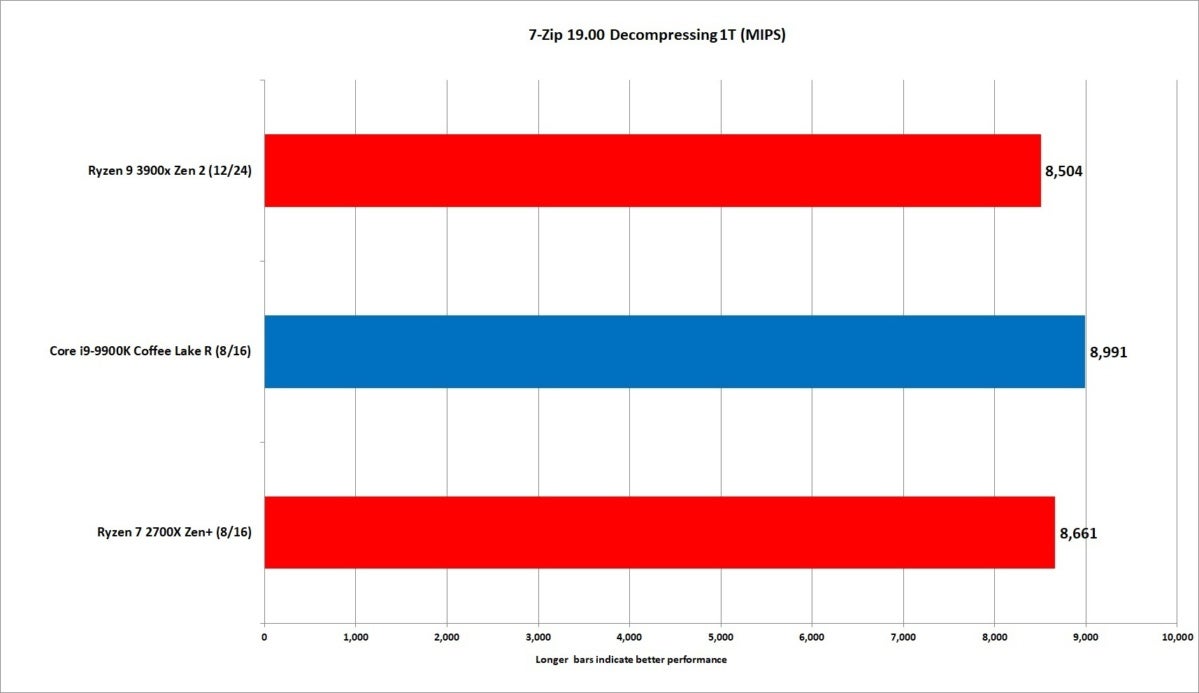

The good news for Ryzen 9 3900X is its performance in the far more popular 7Zip is pretty damn good.

The test reports compression performance and decompression performance. The app’s creator has said compression speed depends mostly on memory latency as well as data cache size and speed. How well a CPU handles out-of-order execution also helps.

IDG

The single-threaded performance in 7Zip slightly favors the Core i9 chip.

Decompression performance is largely reliant on integer performance and how well the CPU handles branch mispredictions.

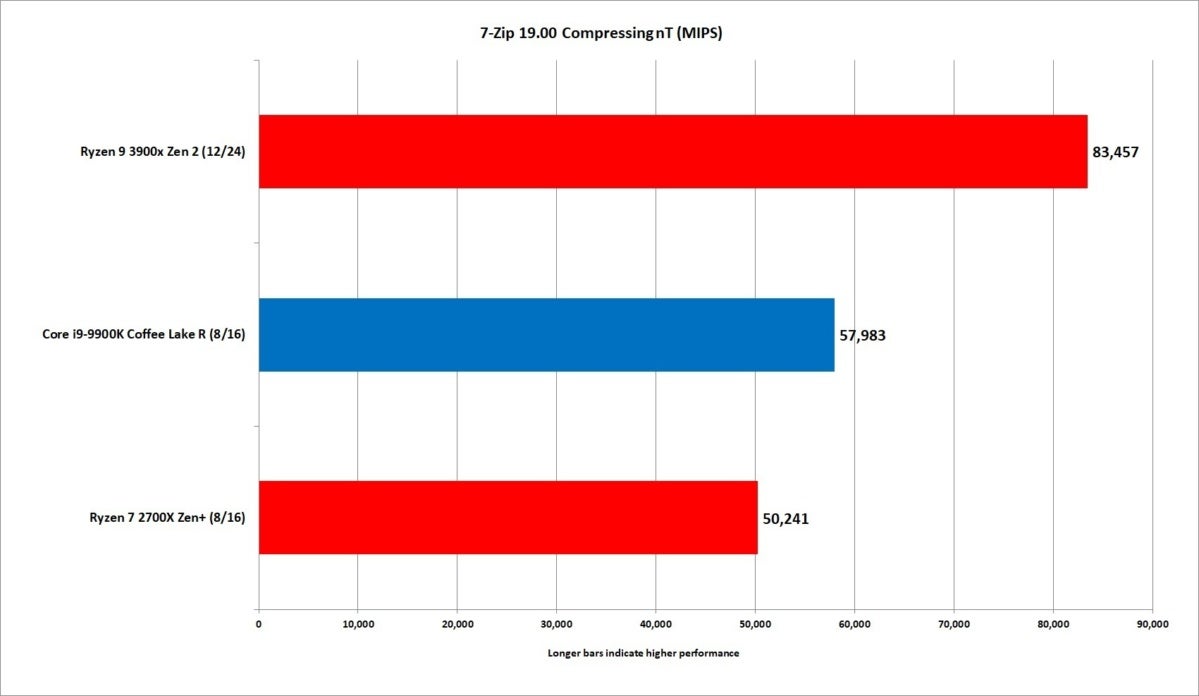

We run 7Zip 19.00 using the default dictionary size of 32MB. We test with the number of threads set equal to the CPU’s available threads, and also with a single thread.

In single-threaded performance, the 5GHz Core i9, with its roughly 8-percent clock advantage over the 4.6GHz Ryzen 9, yields about a 7.5-percent performance advantage in the compression portion. In multi-threaded performance, we see the Ryzen 9 gain a 36-percent advantage over the Core i9.

IDG

Multi-threaded performance sees the Ryzen 9 dominate easily.

Moving over to the decompression test, the single-threaded gap between the Core i9 and Ryzen 9 closes to 5 percent in favor of the Intel CPU. When you factor in all of the CPU cores available, the Ryzen 9 sprints past the Core i9 by a whopping 46 percent.

IDG

Decompressing is traditionally greatly limited by integer performance and how well a CPU can handle branch misprediction.

While the Core i9 has a single-digit advantage in single-threaded tasks, we have to say the Ryzen 9 is so close it doesn’t matter. When you open it up to all cores—it’s an epic beating.

IDG

In integer performance, the results indicate single-threaded performance is nearly a tie between all three, with the Core i9 squeaking out ahead.

Keep reading for game performance testing.

Ryzen 9 3900X Gaming Performance

It’s pretty much been all sunshine and roses for the Ryzen 9 3900X so far. The final question is its gaming performance. Ever since the original Ryzen 7 1800X launch, gaming, especially at lower resolutions where the game isn’t bottlenecked by the GPU, has been nothing but controversy. It’s also been the one shining area for Intel.

As we said earlier, for our tests, we used GeForce GTX 1080 FE cards and also RTX 2080 Ti cards. We did our primary testing at 1920×1080 resolution and also tested at 2560×1440. To save you space, we pretty much omitted the results at 2560×1440 resolution because, well, you don’t want to look at a bunch of results where they’re all nearly the same. Even in tests where the Ryzen 7 2700X might fall behind, it’s not really that big of a deal in GPU-limited gaming.

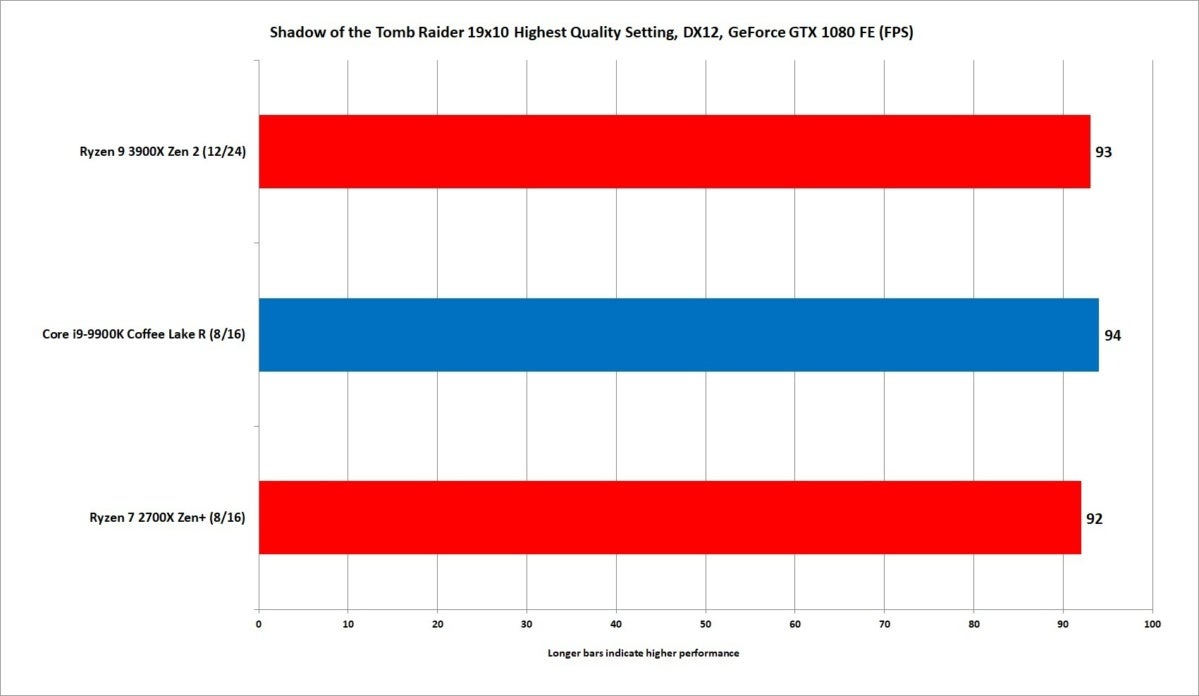

First up is Shadow of the Tomb Raider, which we run on the GeForce GTX 1080 FE card using the Highest Quality setting. As you can see, it’s a tie! Win, right? Well, not really. The real issue is that the Highest Quality setting is enough to make the now-ancient GeForce GTX 1080 FE seem downright slow. It’s the bottleneck with these CPUs.

IDG

As you can see, Shadow of the Tomb Raider, even at 1920×1080 resolution, is bottlenecked by the GPU.

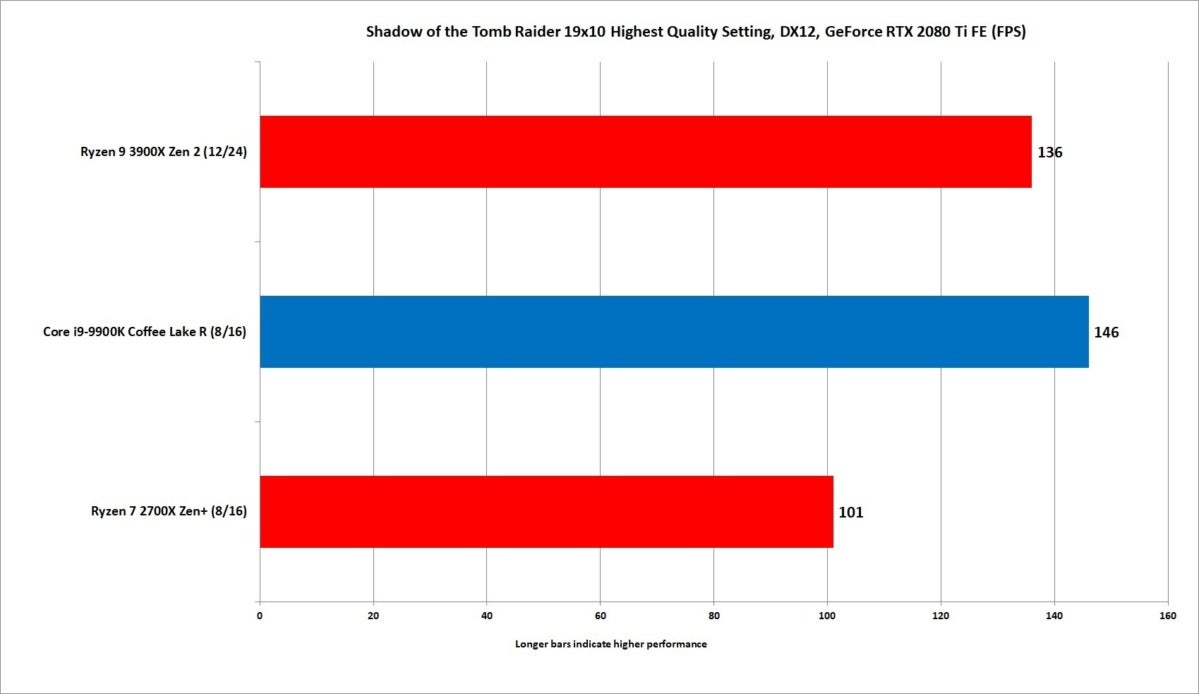

You can see why the minute we swap in the mean, lean GeForce RTX 2080 Ti FE: The Ryzen 7 2700X immediately falls to a distant third place. But how does that Ryzen 9 3900X do? We’d have to say pretty good, as it’s about 7 percent slower than the Core i9-9900K (which has an 8-percent higher clock speed). It’s certainly better than the Ryzen 7 2700X, which exhibits the same problems with 1080p gaming that the original Ryzen 7 1800X suffered.

IDG

Playing modern games with the new Ryzen 9 or Core i9 needs a fast GPU too.

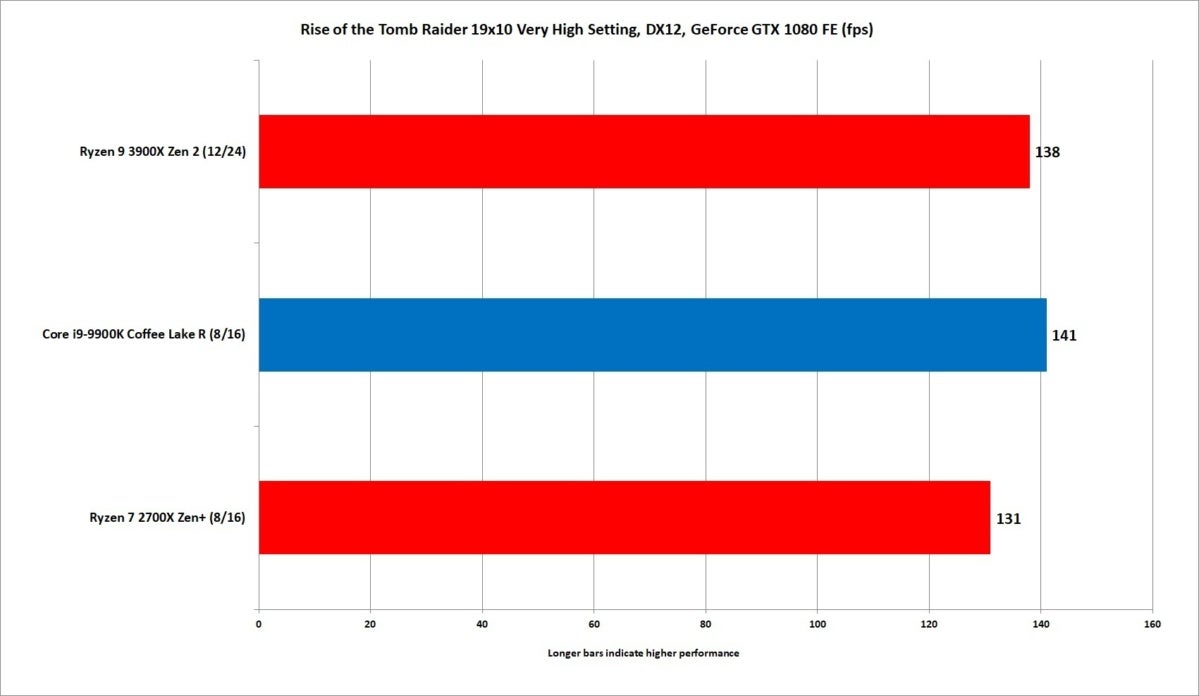

Moving on to the slightly older Rise of the Tomb Raider, we again see just how a “slow” card like the old GeForce GTX 1080 FE is the bottleneck in RoTR. It’s basically a three-way tie on the 1080, and you’d likely see that on any card short of an RTX 2080 and up.

IDG

The GeForce GTX 1080 FE is the bottleneck here on gaming.

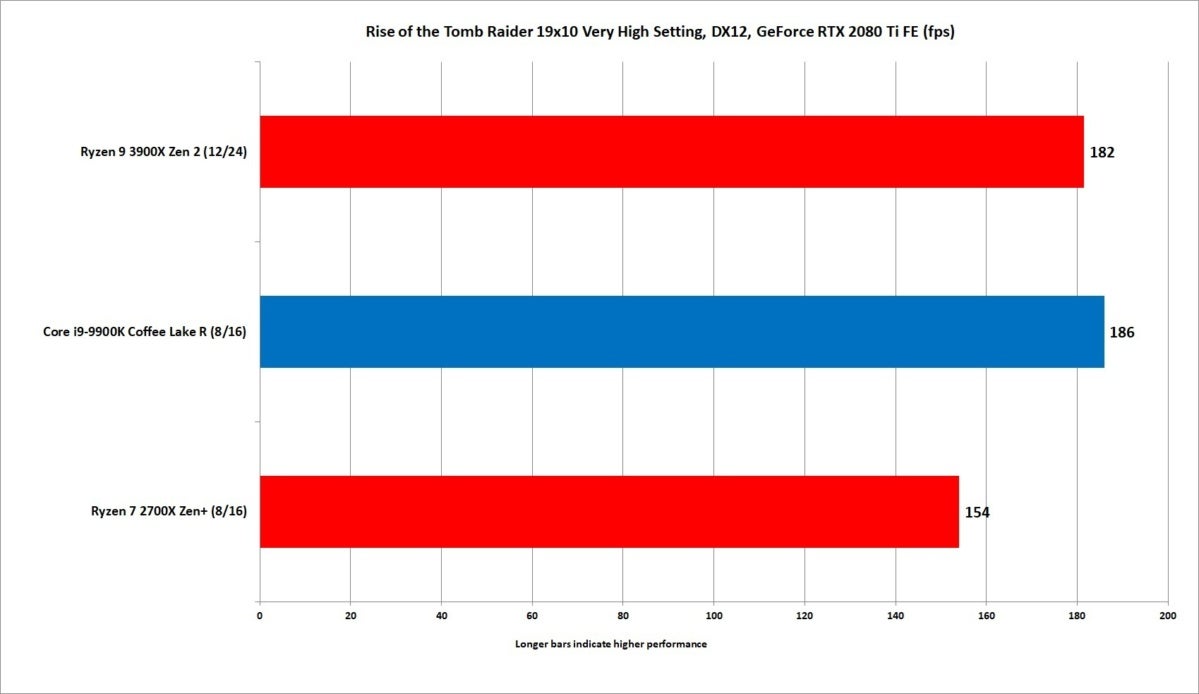

Fortunately, Nvidia makes that handy $1,200 GeForce RTX 2080 Ti card, which still slightly favors the Core i9 but only by about 2 percent. The Ryzen 7 2700X can’t say the same as it trails by the familiar amount we’re used to with older Ryzen CPUs against Core.

IDG

The Ryzen 9 3900X doesn’t beat the Core i9, but it’s pretty close.

If you’re here to see the Ryzen 9 3900X leave the Core i9-9900K eating its dust in gaming benchmarks, prepare to be disappointed. In most of the games we tested using that wicked GeForce RTX 2080 Ti FE, the Ryzen 9 3900X trailed by single-digit margins of 1 percent to 7 percent.

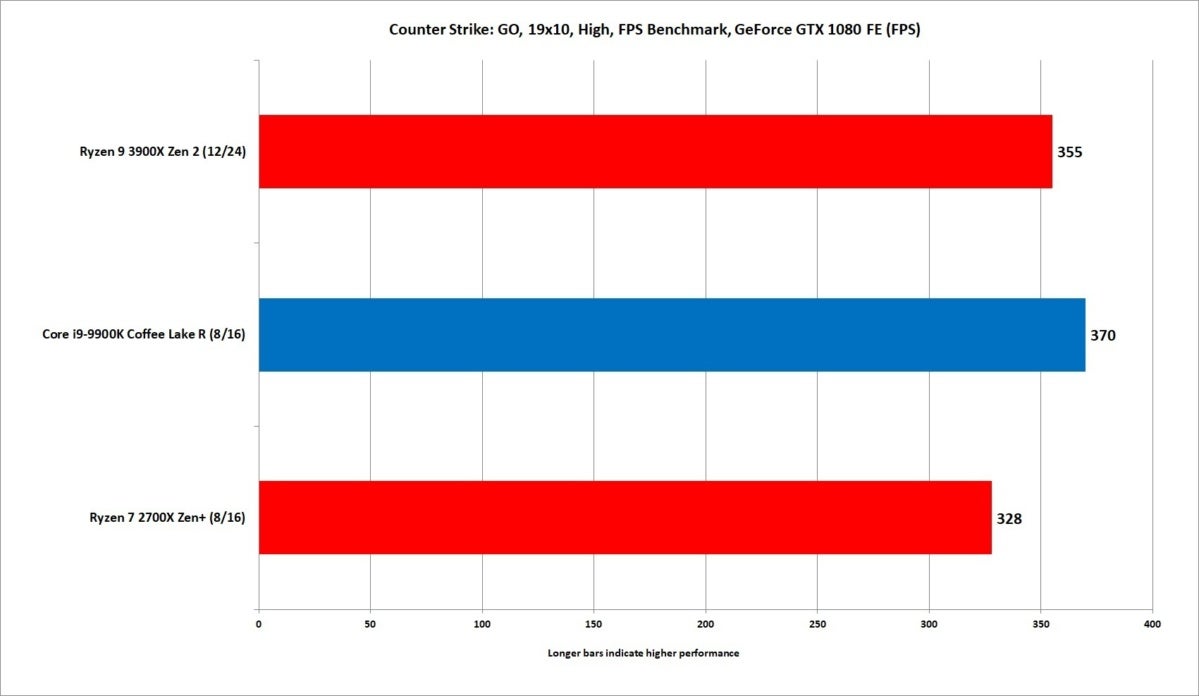

IDG

The ancient CS:GO is a game we only needed to break out the GTX 1080 FE for. It’s not like you need more than 300 fps.

Ryzen fans shouldn’t take that as a failure. In many ways, we see it as a win for Team Red. Remember: The two previous generations of Ryzens have trailed Core i7 and Core i9 by double digits in the vast majority of games at 1080p resolution. To see the Ryzen 9 close to striking distance in just about every game we ran is a major upgrade. That also means you will occasionally hit games where the Ryzen 9 3900X flops against Core i9.

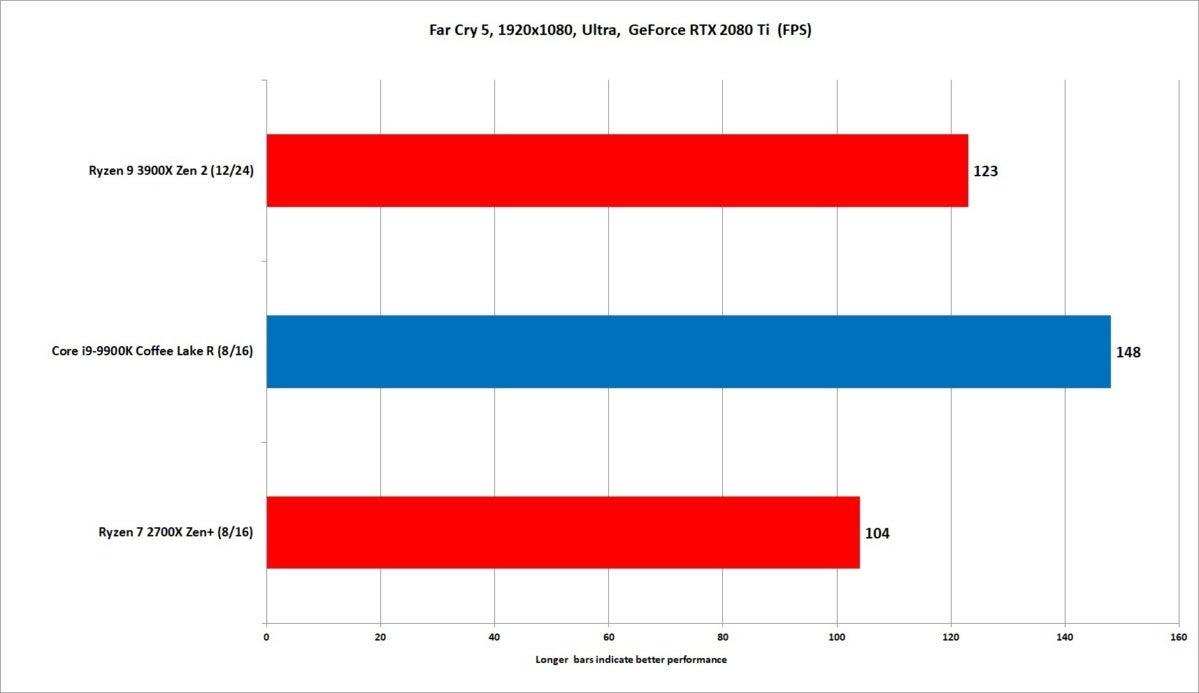

In Far Cry 5, we actually saw a fairly big gap of about 15 percent between the Ryzen 9 and Core i9. Of the games we tested, Far Cry 5 coughed up the largest gap, but lest you think the situation hasn’t improved much over 2nd-gen Ryzen, it has. As you can see, the Ryzen 7 2700X sits even further back than the Ryzen 9 3900X.

(In our original review, we were unable to run Far Cry 5 on the Ryzen 7 2700X due to game activation limitations with our legally licensed copy. We’ve since updated results with Far Cry 5 and Deus Ex: Mankind Divided.)

IDG

Far Cry 5 is one of the titles that Core i9 heavily leads the way on.

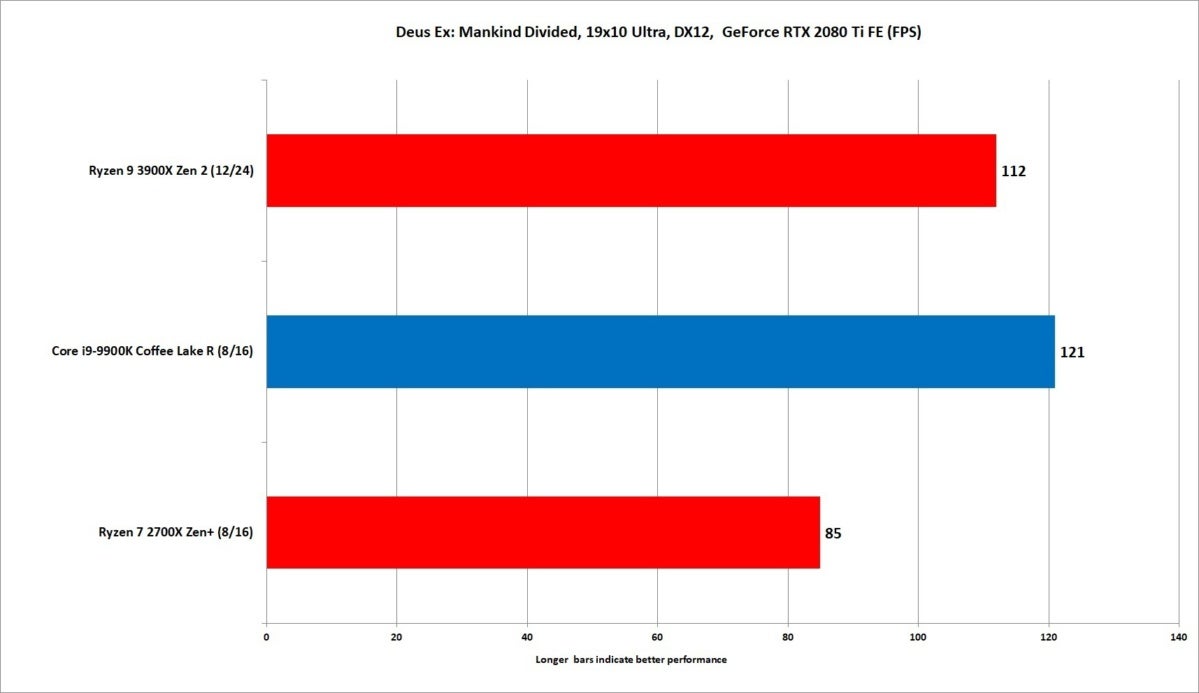

That graphic above might give you a heartache, but most of the games looked like Deus Ex: Mankind Divided, which gave the Core i9 about a 7-percent lead over the Ryzen 9. (Yes, the copy protection for Deus Ex gave up the ghost during our test runs too, so the Ryzen 7 2700X was not tested with the RTX card.)

We ran the popular game Rainbow Six Siege with similar results. Sure, Ryzen 9 is in second place, but not by much. Compare its results to the Ryzen 7 2700X.

IDG

Yes, Ryzen gaming has improved. Just look at the performance of Deus Ex: Mankind Divided between the two Ryzen chips.

If we had to call a victor based solely on gaming, we’d give it to the Core i9. But the slim victory makes it hardly a victory at all, because even the Core i9’s wins are by such small margins—certainly far smaller than against any older Zen or Zen+ CPU. And we have to move all the way to a $1,200 graphics card to see the separation. We really think anything below an RTX 2080 will likely make it nearly impossible to tell the difference between the two CPUs at 1920×1080.

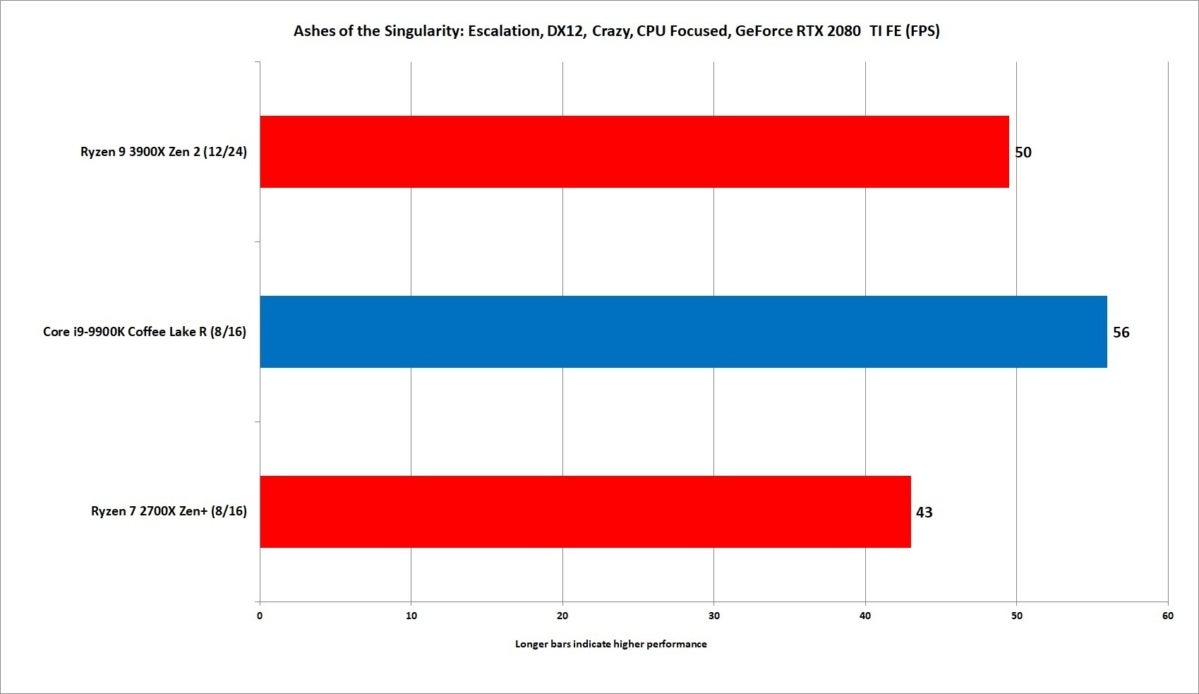

IDG

The CPU Focused Test in Ashes is indeed a CPU test, because we barely saw frame rates budge going from a GeForce GTX 1080 to GeForce RTX 2080 Ti.

Conclusion

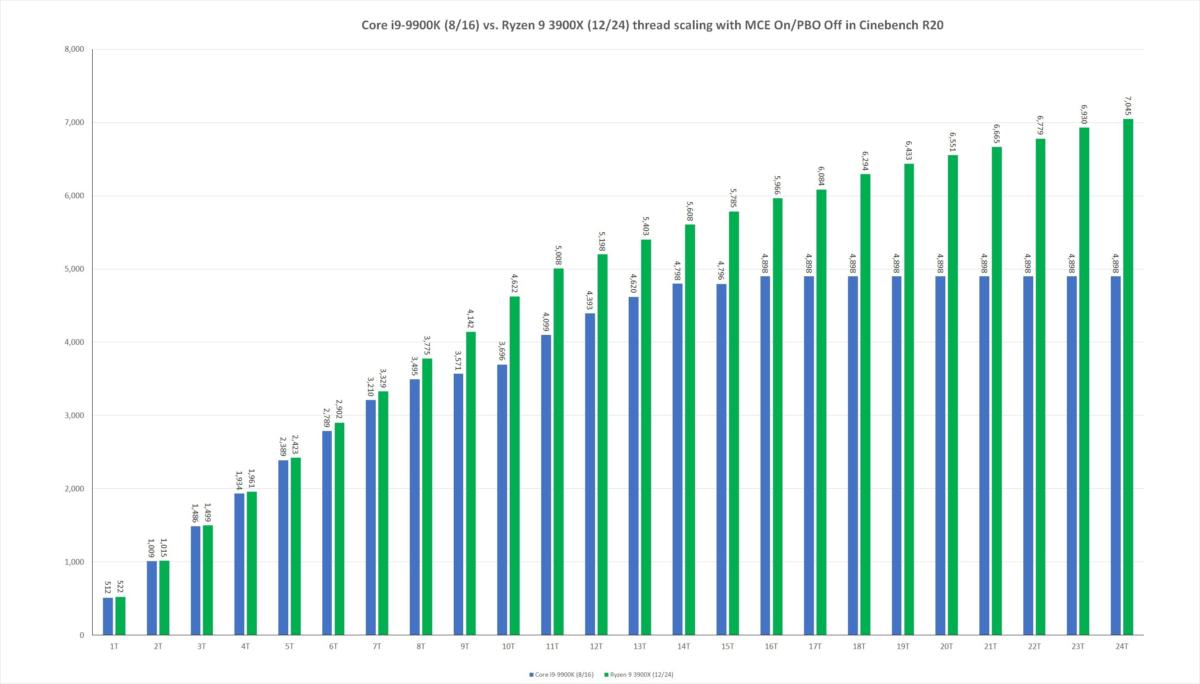

To close off our review, we like to run Cinebench using anywhere from 1 thread to 24 threads. Cinebench R20 is a 3D modeling benchmark that doesn’t predict gaming performance or other application performance, but a lot of games and applications just can’t take advantage of all the threads in modern CPUs. That’s why Cinebench R20 has value in demonstrating performance as the CPU is loaded up from 1 thread onward.

On the chart below, AMD typically dominates the right side of the chart, where it almost always has an advantage in the number of cores over Intel chips.

Intel, on the other hand, typically loses on the right side but wins on the left side, because it usually has a clock speed and IPC advantage over AMD chips. That’s basically given Intel’s Core chips their only edge, because the vast majority of applications and games rely on performance on the left side of our chart. Well, if you look at our chart today between the Ryzen 9 3900X and the Core i9-9900K, that last reason is essentially gone now.

IDG

When you run Cinebench R20 using 1 thread to 24 threads, we can see the Ryzen 9 3900X’s real strength as it gives no quarter to the mighty Core i9.

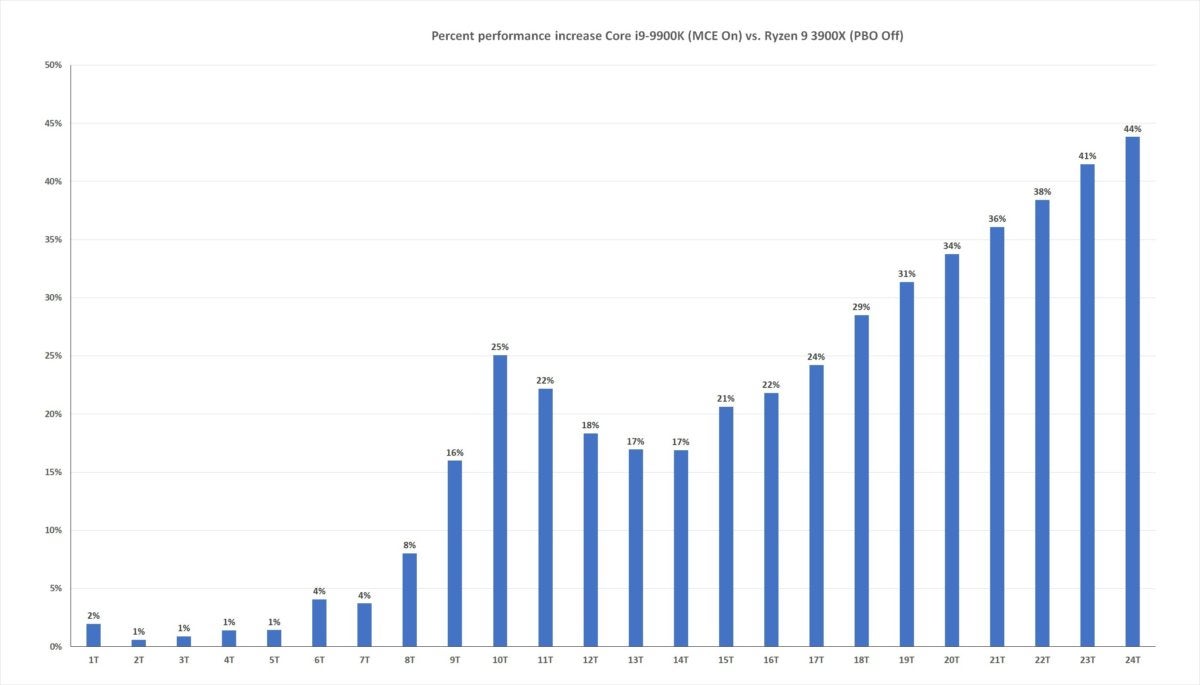

For another view of the same data, we’ve whipped up a chart that shows the performance advantage as a percentage. As you can see, the simple math of 12 cores > 8 cores wins big time.
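If you want to build the same kind of percentage view from your own runs, the calculation is a one-liner per thread count. The sketch below uses made-up placeholder scores that are loudly not our measured results. The function and variable names are likewise just illustrative.

```python
# Percentage advantage of chip A over chip B at each thread count.
# The scores below are PLACEHOLDERS for illustration, not measured results.

def percent_advantage(score_a: float, score_b: float) -> float:
    """How much faster A is than B, in percent (negative if A is slower)."""
    return (score_a / score_b - 1) * 100

placeholder_a = {1: 100.0, 8: 800.0, 24: 1500.0}  # hypothetical scores, chip A
placeholder_b = {1: 100.0, 8: 800.0, 24: 1000.0}  # hypothetical scores, chip B

advantage = {
    threads: percent_advantage(placeholder_a[threads], placeholder_b[threads])
    for threads in placeholder_a
}

for threads, pct in advantage.items():
    print(f"{threads:>2} threads: {pct:+.1f}%")
```

A value near zero means the two chips are tied at that thread count. Positive values show where the extra cores start paying off.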

The worst news for Intel’s Core i9 is once again on the left side of the chart. There’s nothing left here. The two are in a dead tie up to eight threads, where the Ryzen 9 takes over.

IDG

The Ryzen 9 is basically dead even with the Core i9 when loaded up to 8 threads. After that, the 12-core chip pulls away at warp speed from the 8-core chip.

On those low-thread-count loads, the Ryzen 9 3900X is every bit as fast as the Core i9-9900K. This basically means there are very few reasons left to buy a Core i9 today. The reasons left are real, but for probably 9 out of 10 consumers looking at a high-end CPU, they’ll want to buy the Ryzen 9 3900X.