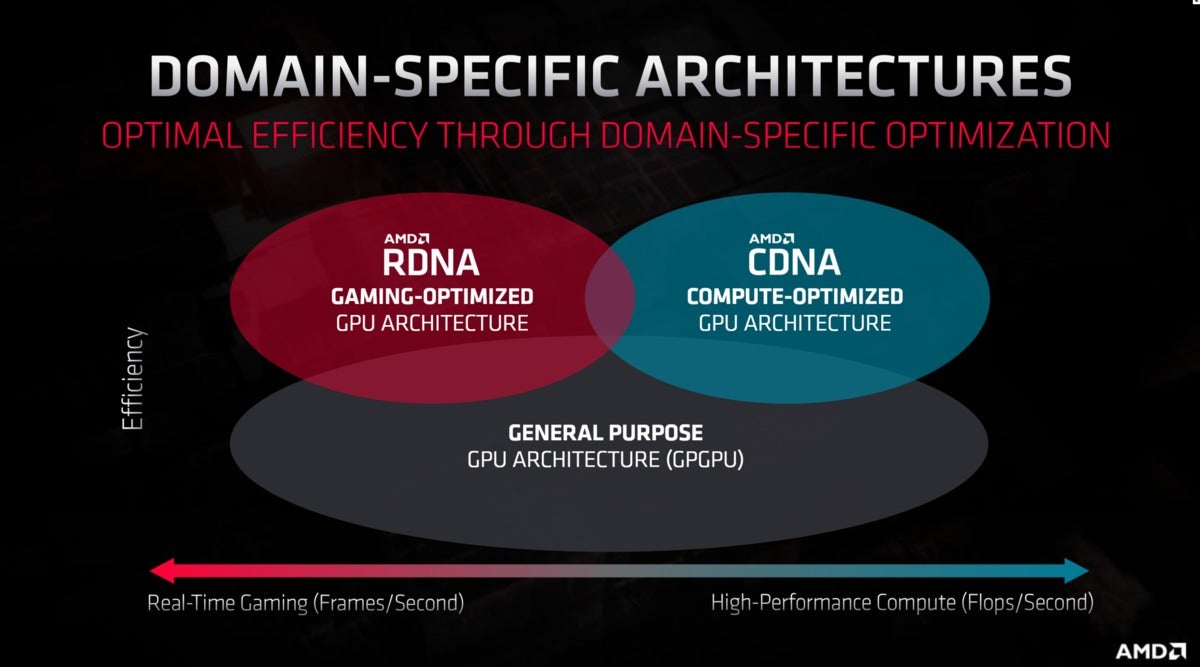

AMD gave us a glimpse of its future this week, divulging new information about its Radeon and Ryzen roadmaps during the company’s Financial Analyst Day. Lots of new information was disseminated, but as PCWorld’s resident GPU expert, the part that jumped out at me was the introduction of “CDNA,” a new, compute-specific graphics architecture built for data centers. No, not because I’ve suddenly become interested in crunching numbers, but because sharing a singular architecture for consumers and workstations gave Radeon graphics cards some notable benefits—and drawbacks—over the years.

What will CDNA’s debut mean for the “RDNA”-based consumer Radeon graphics cards that PC gamers buy? It’s too soon to tell for sure, but we can dig into some of the potential ramifications.

One arch to rule them all

Traditionally, each generation of AMD’s various Radeon graphics offerings revolved around the same underlying architecture. Radeon RX Vega graphics cards, the radical Radeon Pro SSG, and Radeon Instinct server accelerators all used AMD’s Vega GPUs, for example, though the software and warranties available for each differ. By comparison, Nvidia creates different GPU configurations for its data center and consumer graphics products.

Brad Chacos/IDG

AMD used its HBM-enhanced Radeon Vega GPUs in consumer cards, workstation cards, and server accelerators.

Using a single architecture helped AMD compete in several arenas—consumer, workstations, and data centers—without having to spend a fortune developing bespoke solutions for each. Creating GPUs is very expensive. Frankly, AMD might not have been able to afford creating multiple concurrent GPU architectures before, as the company was bleeding cash and almost (almost) on its last legs before it rose from the ashes on the back of Ryzen. Cutting the same pie into several pieces was prudent. It also came with both advantages and disadvantages.

On the negative side, reusing the same GPU architecture everywhere meant that AMD’s various Radeon GPU products were often the jack of all trades, but master of none. While Nvidia crafted Tesla GPUs perfectly tailored for data center precision, Radeon Instinct cards were saddled with some hardware better suited for rendering games, while Radeon graphics cards were infused with some internals better suited for compute tasks.

AMD

That’s not all bad though. Remember how coveted Radeon graphics cards were during the Bitcoin mining boom a couple of years back? You can thank those data-centric bits. Likewise, AMD’s heavy push towards async compute leveraged those capabilities, and helped spark the rise of technologies such as DirectX 12 and Vulkan on the PC. AMD had a leg-up on those workloads for half a decade. Nvidia only caught up to AMD’s async compute capabilities with the Turing GPU architecture inside RTX 20-series graphics cards.

The right tool for the right job

That’s changing though. With RDNA for consumer graphics cards and CDNA for compute tasks, AMD’s finally heading down the path blazed by Nvidia, and all Radeon graphics products should get better in the process.

“[CDNA] is a good move as datacenter GPUs don’t need many of the features a consumer graphics card needs,” Patrick Moorhead, principal analyst of Moor Insights & Strategy (and former AMD VP) said in an emailed statement. “This includes elements like display and pixel rendering engines, and ray tracing. This means AMD can save cost by removing those elements and add more gates that help datacenter performance like tensor OPS. AMD hadn’t done it until today because it couldn’t afford to have two architectures.”

Nvidia

Tesla GPUs lack video ports, but Nvidia wouldn’t ship a GeForce graphics card without them.

Cutting all those game-rendering bits out means AMD can devote more space in CDNA GPUs towards hardware that accelerates its central task: compute workloads. Nvidia’s Tesla GPUs, for example, are much better at FP16 “half precision” tasks than GeForce graphics cards, which greatly enhances their performance in machine learning. Tesla cards don’t even pack video outputs. AMD’s CDNA hardware could follow suit.

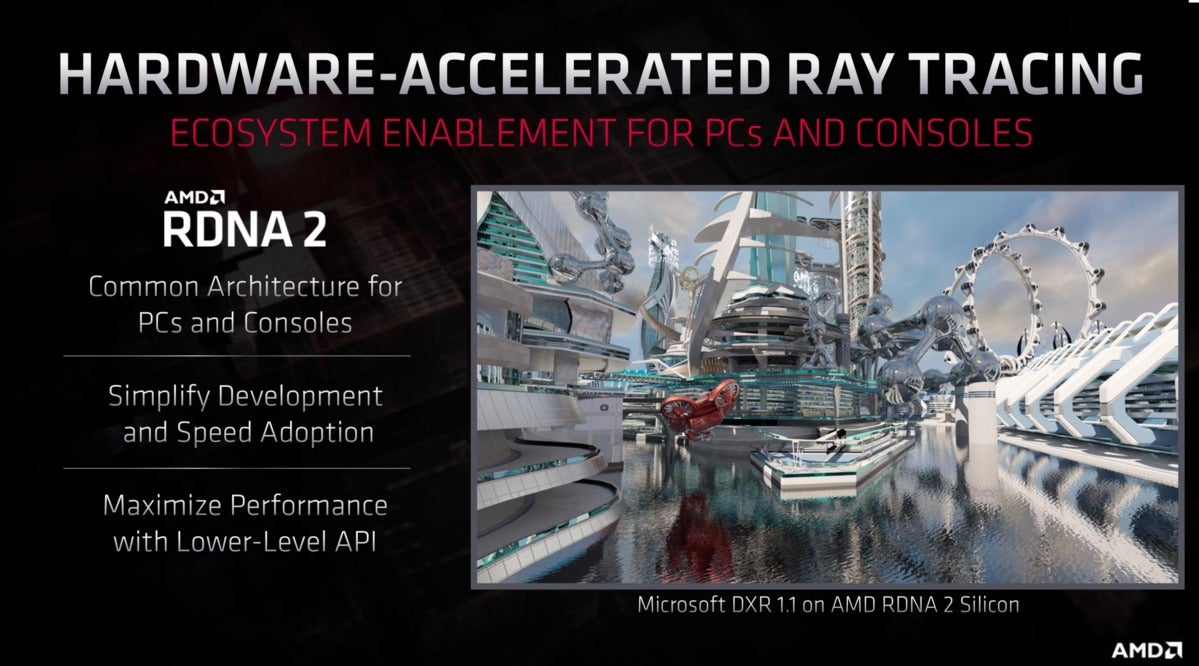

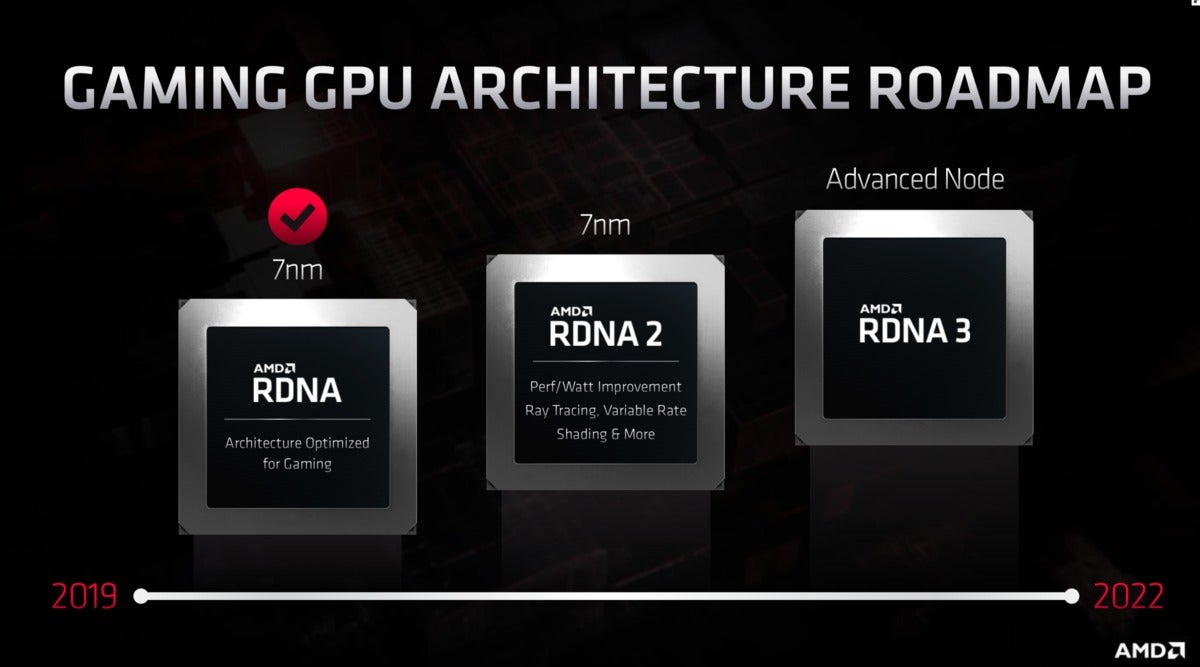

Conversely, dumping some of the more compute-centric hardware from Radeon GPUs could help AMD optimize its desktop and laptop graphics cards for greater gaming performance. Graphics cards based on the RDNA2 architecture will land on PCs in 2020, promising real-time ray tracing, a massive 50 percent improvement in performance-per-watt over first-gen “Navi” RDNA GPUs, and a return to high-end gaming with “Big Navi” in some shape or form.

AMD

Ray tracing is coming with RDNA2, but that doesn’t necessarily mean it’s coming to CDNA.

“By removing datacenter features, AMD can add more gaming features,” Moorhead told me via email. “For instance, if 10 percent of RDNA silicon were datacenter-specific (like virtualization), AMD has 10 percent more die area it can add for gaming-only features, all things equal. That 10 percent could be more ray tracing features, more shader compute units, etc.”

AMD hasn’t been able to come close to Nvidia’s vaunted GeForce RTX 2080 Ti thus far, much less whatever its successor will be if Nvidia’s rumored next-gen “Ampere” GPUs launch later this year. That said, if introducing CDNA lets AMD rip a bunch of superfluous compute hardware out of RDNA2 GPUs and fill that space with more gamer-centric goodies, we could be looking at a drastic overhaul of AMD’s consumer Radeon graphics cards, creating a new-look beast much more potent than before—especially if the company’s able to hit those 50 percent performance-per-watt increases it teased at its Financial Analyst Day.

AMD’s current RDNA Radeon RX 5700 series graphics cards own the 1440p gaming crown despite using a shared architecture with data center GPUs. If AMD’s able to infuse even more gamer tech into its successor, while reducing power draw? Wooooo boy.

AMD

Don’t get hyped yet, but do keep your fingers crossed. We’re just speculating here, remember. AMD hasn’t said that the introduction of CDNA will materially change the design of consumer RDNA graphics cards, much less that RDNA2 GPUs will be affected even if so. It could take years for CDNA and RDNA to substantially diverge. With both CDNA and CDNA 2 planned to launch before 2022, however, we’d doubt it.

Next-gen RDNA2 graphics cards will launch before the end of the year, AMD said, which likely means closer to the end of the year. In the meantime, be sure to check out our coverage of all the announcements at AMD’s Financial Analyst Day, and what Microsoft’s recent Xbox Series X teases tell us about RDNA2-based Radeon graphics cards.